Most organisations rush to deploy AI and then wonder why it doesn't scale. The ones that get it right build the foundations first (e.g. governance, infrastructure, and clear ownership) before a single model goes to production.

The AI Rush Has a Hidden Cost

There is a pattern playing out across enterprises right now. A business unit sees a competitor launch a GenAI feature. An executive sponsors a proof of concept (PoC). The PoC gets positive feedback.

Eventually someone asks: "Can we productionise this?"

That's the moment organisations discover they lack the foundations to answer “Yes!”, for several reasons:

- No clear ownership of the model.

- No audit trail for its outputs.

- No process for what happens when it drifts.

- No way to scale the pattern to the next use case without starting from scratch.

The organisations that are scaling AI successfully in 2026 share one trait: they built a Target Operating Model (TOM) for AI before they had dozens of models in production — not after. This post lays out what that looks like, from governance foundations to the specific pillars that make scaling AI real and tangible.

The Five Layers of an AI TOM

Think of an AI operating model as a stack. Each layer must be solid before the one above it can function reliably. Skip a layer and you'll feel the gap, usually in production and at the worst possible moment.

1. Governance & Risk

Every organisation needs to ask fundamental questions before it leverages AI: Who owns AI decisions? How are use cases approved? What happens when a model underperforms or produces harmful output? The governance layer is where those questions get answered.

This layer cuts across everything else — it's not a phase you complete once. It's a permanent, live capability.

2. AI Strategy & Use Case Portfolio

A structured way to decide what to build, and what not to. To keep things simple, categorise AI initiatives into three buckets: AI for efficiency, AI for augmentation, and AI for enterprise transformation. Prioritise by value versus feasibility.

The trap most organisations fall into is over-investing in GenAI because it's visible, while neglecting the data quality work that actually determines model performance.
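The value-versus-feasibility prioritisation can be sketched in a few lines. This is a minimal illustration, not a prescribed scoring method: the 1 to 5 scales, the multiplication rule, and the example use cases are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    bucket: str       # "efficiency", "augmentation" or "transformation"
    value: int        # hypothetical scale: 1 (low) to 5 (high)
    feasibility: int  # hypothetical scale: 1 (hard) to 5 (easy)

    @property
    def score(self) -> int:
        # Assumed scoring rule: value multiplied by feasibility.
        return self.value * self.feasibility


def prioritise(use_cases):
    """Return use cases ranked by score, highest first."""
    return sorted(use_cases, key=lambda u: u.score, reverse=True)


# Hypothetical portfolio, one entry per bucket.
portfolio = [
    UseCase("Autonomous pricing", "transformation", value=5, feasibility=1),
    UseCase("Invoice triage", "efficiency", value=3, feasibility=5),
    UseCase("Analyst copilot", "augmentation", value=4, feasibility=3),
]

ranked = prioritise(portfolio)
for u in ranked:
    print(f"{u.name:20} {u.bucket:15} score={u.score}")
```

The highly visible transformation idea ranks last here because its feasibility is low, which is the kind of trade-off an explicit score makes hard to ignore.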

3. Data Infrastructure

A Lakehouse architecture is the current enterprise standard: a unified storage layer accessible to both analytics and AI workloads. Note that GenAI adds a new first-class concern: vector stores for RAG pipelines.

These are no longer optional; they're as fundamental as your data warehouse.
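To make the role of a vector store concrete, here is a toy in-memory sketch of what one does for a RAG pipeline: hold text alongside embeddings and return the closest matches to a query. The class name `TinyVectorStore`, the three-dimensional embeddings, and the documents are all hypothetical; a real deployment would use an embedding model and a dedicated vector database.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store; illustrates retrieval only."""

    def __init__(self):
        self._items = []  # list of (text, embedding) pairs

    def add(self, text, embedding):
        self._items.append((text, embedding))

    def search(self, query_embedding, k=2):
        # Rank stored items by similarity to the query, best first.
        ranked = sorted(
            self._items,
            key=lambda item: cosine(query_embedding, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


store = TinyVectorStore()
# Hand-written toy embeddings; a real pipeline would compute these.
store.add("Annual leave policy", [0.9, 0.1, 0.0])
store.add("Expense claims process", [0.1, 0.9, 0.0])
store.add("Parental leave policy", [0.8, 0.2, 0.1])

top = store.search([1.0, 0.0, 0.0], k=2)
print(top)
```

The retrieved passages are what a RAG pipeline would pass to the model as context.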

4. MLOps & AI Engineering Platform

This is the operational backbone. It covers experiment tracking, model registries, CI/CD pipelines, drift monitoring, and feedback loops.
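As an illustration of drift monitoring, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a live sample of one feature. PSI is one common drift statistic chosen here for illustration; it is not the only option. The bin count and the 0.1 / 0.25 thresholds are widely used rules of thumb, treated here as assumptions to tune.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm is defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score, warn=0.1, alert=0.25):
    """Map a PSI score to a status using assumed rule-of-thumb thresholds."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "stable"


baseline = [i / 100 for i in range(100)]  # hypothetical training-time values
shifted = [v + 0.5 for v in baseline]     # hypothetical live values

print(drift_status(psi(baseline, baseline)))
print(drift_status(psi(baseline, shifted)))
```

In a platform setting, a check like this would run on a schedule per feature and feed the alerting and retraining loops.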

For GenAI, extend this to LLM operations (LLMOps): the practices, tools, and processes for developing, deploying, and maintaining Large Language Model applications in production. Prompt versioning, evaluation frameworks, guardrails, and token cost management come into play here.

For agentic AI, consider adding stateful orchestration, observability, guardrails (including circuit breakers), and human escalation paths (human-in-the-loop).
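A circuit breaker with a human escalation path might look like the sketch below. It is a minimal illustration under stated assumptions: the class name `AgentCircuitBreaker`, the failure threshold, and the payment-limit guardrail are all hypothetical and not tied to any agent framework.

```python
class AgentCircuitBreaker:
    """Blocks an agent after repeated guardrail failures and escalates."""

    def __init__(self, max_failures=3):
        self.max_failures = max_failures
        self.failures = 0
        self.open = False  # open circuit = agent proposals are blocked

    def run(self, propose, guardrail, escalate):
        # When the circuit is open, skip the agent and go to a human.
        if self.open:
            return escalate("circuit open")
        proposal = propose()
        if guardrail(proposal):
            self.failures = 0  # a passing proposal resets the counter
            return proposal
        self.failures += 1
        if self.failures >= self.max_failures:
            self.open = True
        return escalate(f"guardrail rejected proposal: {proposal!r}")


escalations = []


def escalate(reason):
    # Hypothetical escalation hook: record the reason for human review.
    escalations.append(reason)
    return None


def within_limit(amount):
    # Hypothetical guardrail: payments above 100 need a human.
    return amount <= 100


breaker = AgentCircuitBreaker(max_failures=2)
approved = breaker.run(lambda: 50, within_limit, escalate)
breaker.run(lambda: 500, within_limit, escalate)
breaker.run(lambda: 900, within_limit, escalate)
blocked = breaker.run(lambda: 10, within_limit, escalate)

print(approved, blocked, breaker.open, len(escalations))
```

Note that the final call is escalated even though its proposal would have passed the guardrail: once the circuit is open, nothing runs until a human resets it.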

5. People, Roles & Ways of Working

Technology choices are secondary to whether the organisation can sustain the model. The most mature pattern is a federated Centre of Excellence (CoE): a central team owns standards, platforms, and reusable components, while domain-embedded squads own use case delivery.

Avoid the extremes: fully centralised operations create bottlenecks, and fully decentralised operations leave governance gaps. For most organisations, a hybrid model is the best approach.

The TOM you build for an organisation standing up its first AI use case is fundamentally different from the one you need when you have thirty models in production. Be explicit about where the organisation sits on that maturity curve, because that is the stage the TOM needs to be designed for.

Governance Is Not a Checkbox

Most organisations approach AI governance the way they approach regulatory compliance such as GDPR. The problem with this mindset is that it produces checkbox governance that protects no one.

Effective AI governance is built around three structural decisions:

1. Separate accountability clearly

You need three distinct structures, not one.

- An AI Ethics/Risk Board sets policy and owns escalation for high-stakes decisions.

- An AI Centre of Excellence (CoE) sets technical standards and guardrails.

- Product/Domain teams own individual AI assets and their outcomes.

The common failure is letting the CoE make business risk decisions or, worse, having no clear owner at all. A word of caution here: even a well-intentioned CoE can become a bottleneck if it never evolves. When every use case must queue for central resources, business units start shadow-building to bypass the wait, and eventually governance collapses. The signal to watch for is a growing backlog of AI requests alongside teams hiring their own "AI people" outside the CoE's visibility.

2. Tier your risk

Not all AI presents an equal risk to stakeholders. For example, a GenAI chatbot answering HR FAQs does not belong in the same risk class as an AI Agent executing financial transactions.

To visualise this, build a simple 2×2 matrix of impact severity × autonomy level. Your governance process, approval requirements and monitoring intensity should map directly to that classification.
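The 2×2 matrix can be expressed as a simple lookup so that every use case resolves to an explicit governance treatment. This is a sketch under assumptions: the tier numbers, approval routes, and monitoring descriptions are hypothetical placeholders, and placing the HR chatbot and the transaction agent in opposite corners is an illustrative reading of the examples above.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# (impact severity, autonomy level) -> governance treatment.
# Tier numbers, approvers and monitoring levels are hypothetical.
RISK_MATRIX = {
    (Level.LOW, Level.LOW): {
        "tier": 1,
        "approval": "product/domain owner",
        "monitoring": "periodic review",
    },
    (Level.LOW, Level.HIGH): {
        "tier": 2,
        "approval": "product/domain owner with CoE technical review",
        "monitoring": "automated alerts",
    },
    (Level.HIGH, Level.LOW): {
        "tier": 2,
        "approval": "product/domain owner with CoE technical review",
        "monitoring": "automated alerts",
    },
    (Level.HIGH, Level.HIGH): {
        "tier": 3,
        "approval": "AI Ethics/Risk Board",
        "monitoring": "continuous, with human-in-the-loop",
    },
}


def classify(impact, autonomy):
    """Look up the governance treatment for a use case."""
    return RISK_MATRIX[(impact, autonomy)]


# Assumed placements for the two examples in the text.
hr_chatbot = classify(Level.LOW, Level.LOW)
transaction_agent = classify(Level.HIGH, Level.HIGH)

print(hr_chatbot["tier"], hr_chatbot["approval"])
print(transaction_agent["tier"], transaction_agent["approval"])
```

Keeping the matrix as data rather than logic makes it easy for the risk board to review and change without touching delivery code.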

3. Connect AI Governance to Data Governance

AI Governance and Data Governance cannot operate separately. Data lineage, access controls and quality SLAs are inputs to model reliability and audit readiness. If your data governance is weak, your AI governance has no substance. The EU AI Act, GDPR, and FCA guidance for financial services all assume a level of data traceability that most organisations haven't yet achieved.

Governance is not a layer at the top of the stack. Rather, it's a vertical capability that cuts across every plane of the operating model.

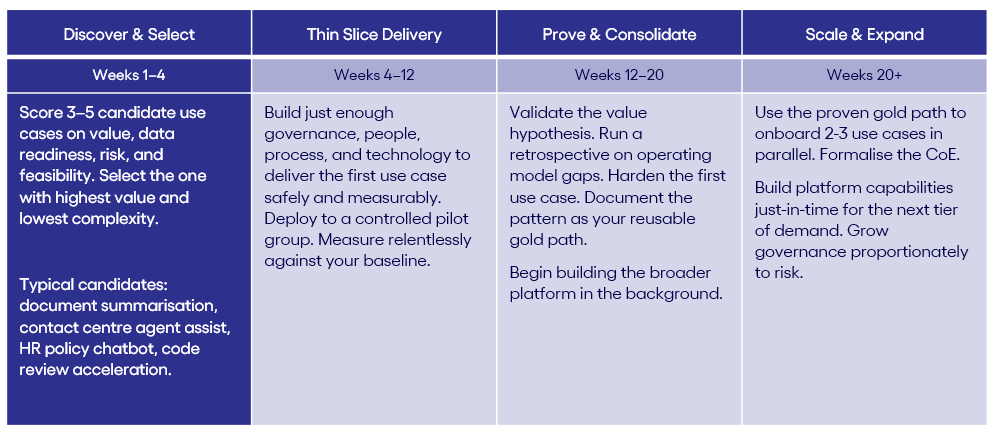

The Golden Path: Do One Thing First and Get It Right

One of the most common failure modes in enterprise AI programmes is trying to build the entire operating model before delivering any value. Organisations spend six to twelve months writing policies, hiring teams, procuring platforms, and running workshops — and emerge with a governance framework but no actual AI capability.

The alternative is the Steel Thread approach: identify one high-value, manageable-risk use case, build the minimum viable operating model to support it, deliver it, prove the value, and then expand using the proven pattern as your template. This industrialises the operating model and ways of working faster, and lets you scale sooner.

Here's how to think about sequencing this: