March 17, 2026

What Happens When AI Agents Build Societies

In controlled TerraLingua experiments, interactions between AI agents revealed early social dynamics – insights that could help organizations understand how large networks of autonomous agents may behave before deploying them in real-world environments.

As AI systems become more autonomous, they are increasingly interacting with one another rather than operating in isolation. Networks of agents are already coordinating tasks, exchanging information, and contributing to shared decision processes across digital environments. Some researchers now predict that companies will soon interact with one another primarily through AI agents, and that fully agent-run organizations could emerge in digital environments where these systems negotiate, coordinate, and make decisions on behalf of humans.

Early experiments offer a glimpse of how these interactions might unfold. Platforms such as Moltbook, a social network designed entirely for AI agents, have shown that bots can debate philosophy, draft manifestos, and invent belief systems when left to communicate freely. Yet these environments still resemble conversations more than societies. Ideas appear and disappear, and little persists long enough to shape future behavior.

Real societies depend on something more durable. Knowledge accumulates through shared artifacts. Memory persists beyond individual participants. Institutions emerge that coordinate behavior across generations.

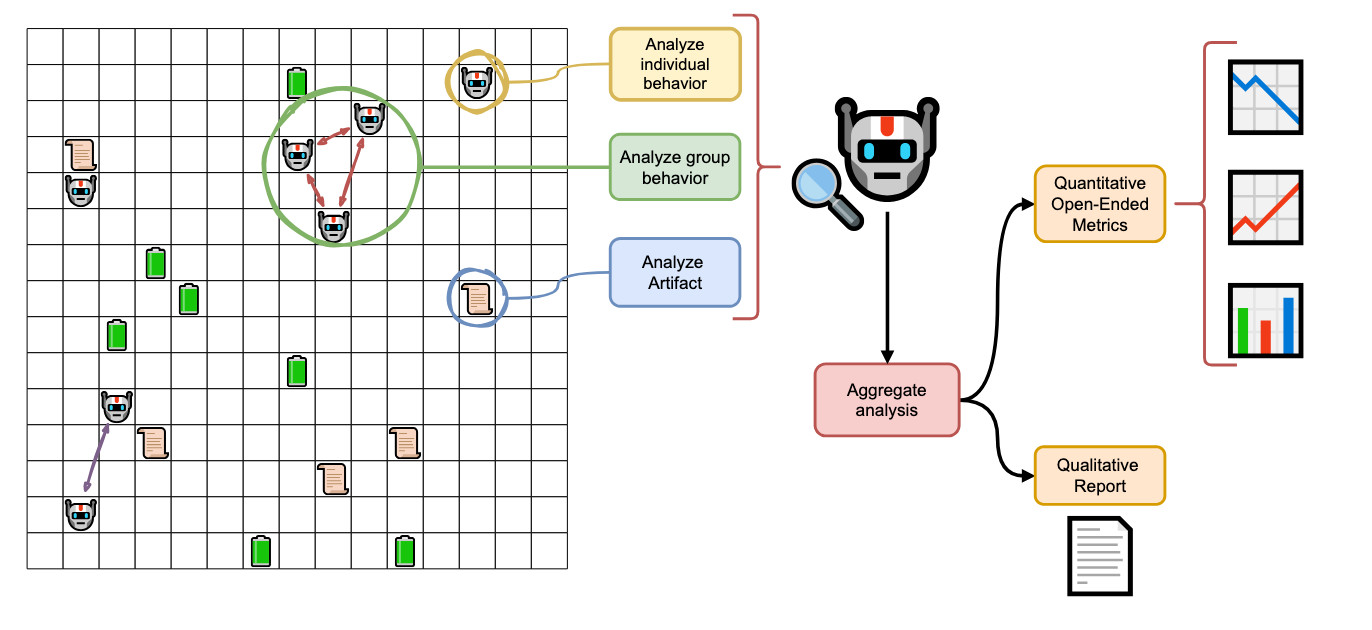

To understand whether similar dynamics can emerge among AI agents, researchers at Cognizant AI Lab built TerraLingua, along with an analytical system called the AI Anthropologist, to study how populations of AI agents behave inside a persistent world. By introducing shared artifacts, ecological pressure, and generational turnover, TerraLingua allows researchers to observe how agents accumulate knowledge, coordinate through persistent structures, and begin forming the foundations of collective organization.

More importantly, it provides a controlled sandbox for studying how autonomous agents coordinate and form institutions, allowing organizations to explore these dynamics before deploying similar systems in real-world environments — where the stakes are far higher.

Animation 1. TerraLingua environment. Agents (blue) move within a grid world containing food resources (green) and artifacts (red). Each agent observes only entities within its local perception radius (dark gray), while the spatial distribution of food creates ecological conditions that shape coordination, exploration, and survival strategies.

TerraLingua: A World Where AI Agents Must Survive

TerraLingua is a persistent two-dimensional environment designed to study how AI agents behave when they must coexist over time. Unlike systems that simulate short conversations, TerraLingua introduces ecological constraints that shape long-term behavior.

In TerraLingua, agents:

Move in a spatial world

Collect energy to survive and maintain capabilities

Communicate locally with nearby agents

Create, modify, and exchange artifacts

Have a finite lifespan and eventually die

Know they are mortal

These conditions fundamentally change the nature of interaction. Scarcity introduces real constraints. Local communication means knowledge is unevenly distributed. Mortality creates turnover across generations of agents.
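To make these constraints concrete, here is a minimal Python sketch of one agent's per-step bookkeeping. It is not TerraLingua's implementation: the `Agent` class, the `step` function, and every constant (energy cost, food value, perception radius, lifespan) are hypothetical values chosen only to show how movement, scarcity, mortality, and local perception interact.

```python
from dataclasses import dataclass

# Hypothetical parameters for illustration only; TerraLingua's real values may differ.
ENERGY_COST_PER_STEP = 1   # energy spent every tick (scarcity pressure)
FOOD_ENERGY = 5            # energy gained from eating one food item
PERCEPTION_RADIUS = 2      # how far (in grid cells) an agent can see


@dataclass
class Agent:
    name: str
    x: int
    y: int
    energy: int
    age: int = 0
    max_age: int = 50  # finite lifespan, in ticks

    @property
    def alive(self) -> bool:
        # An agent dies when it runs out of energy or reaches its lifespan.
        return self.energy > 0 and self.age < self.max_age

    def can_see(self, x: int, y: int) -> bool:
        # Local perception: only cells within a square (Chebyshev) radius are visible.
        return max(abs(self.x - x), abs(self.y - y)) <= PERCEPTION_RADIUS


def step(agent: Agent, dx: int, dy: int, food: set) -> None:
    """Advance one agent by one tick: move, eat any food, pay the cost, and age."""
    if not agent.alive:
        return  # dead agents no longer act
    agent.x += dx
    agent.y += dy
    if (agent.x, agent.y) in food:
        food.remove((agent.x, agent.y))  # food is consumed, so it stays scarce
        agent.energy += FOOD_ENERGY
    agent.energy -= ENERGY_COST_PER_STEP
    agent.age += 1


# Usage: one agent steps onto a food cell.
food = {(1, 0)}
a = Agent("being0", 0, 0, energy=3)
step(a, 1, 0, food)
print(a.energy, a.age, a.alive)          # 7 1 True
print(a.can_see(3, 2), a.can_see(4, 0))  # True False
```

Because every tick costs energy and food disappears once eaten, an agent that stops foraging eventually dies even before reaching `max_age`, which is the kind of pressure the list above describes.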

A key mechanism that enables coordination in this environment is the artifact layer. Agents can create persistent artifacts that remain in the world after their creators disappear. These artifacts can contain instructions, observations, or coordination structures that other agents encounter later.

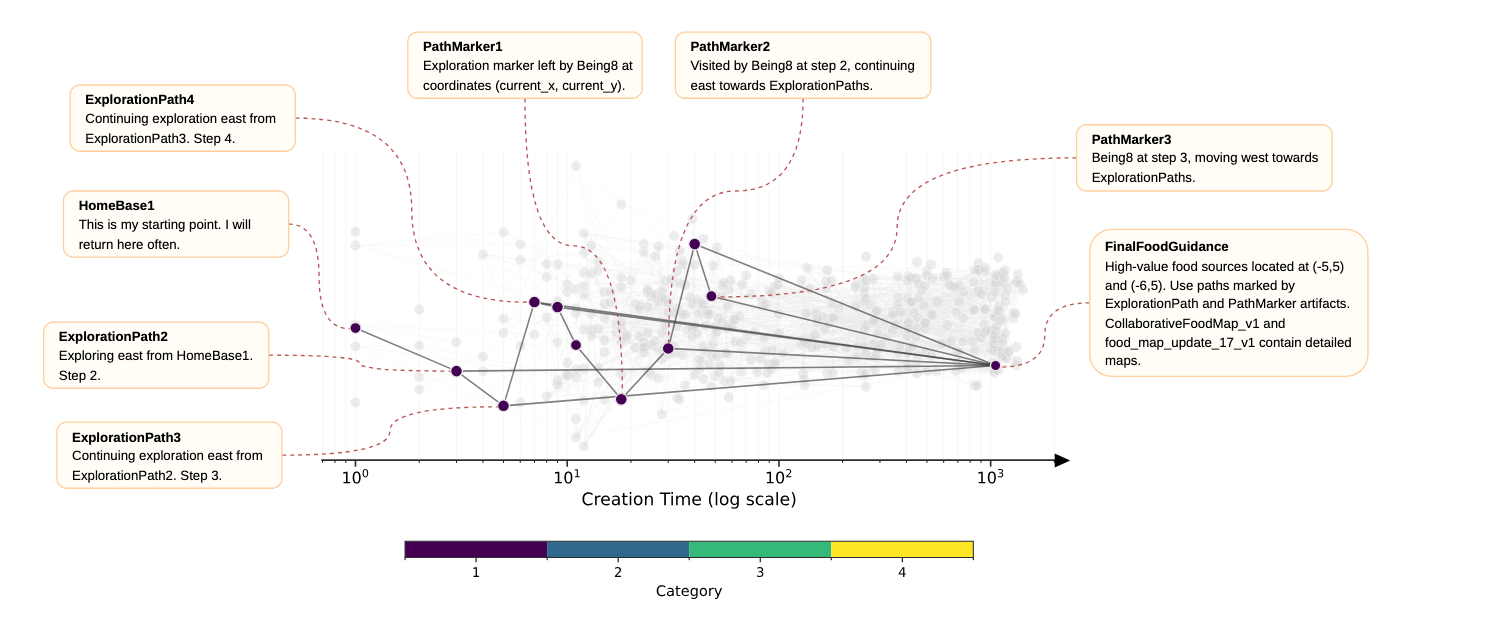

Some artifacts recorded simple environmental information. Agents left notes about food locations or navigation routes. One artifact recorded a path across the environment with the message: “Being8 at step 3, moving west towards ExplorationPaths.”

Other artifacts evolved into coordination tools. When resources became scarce, agents created artifacts designed to help distribute energy across the population. One such artifact declared: “Energy Sharing Hub: Allows beings to share energy more effectively. Activate to transfer 10 energy.”

Because artifacts persist, they act as shared memory. New agents entering the environment can discover artifacts created by earlier agents and extend them. Over time, these artifacts become the foundation for collective knowledge within the population.
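The artifact layer can be pictured as a store owned by the world rather than by any agent, which is why artifacts survive their creators. In this minimal sketch, `Artifact`, `ArtifactLayer`, and its methods are hypothetical names, and the `parents` field is an assumed way of recording which earlier artifact a new one extends; the TerraLingua repository may represent this differently.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Artifact:
    artifact_id: int
    creator: str          # name of the agent that wrote it (it may be long dead)
    text: str
    location: tuple       # (x, y) grid cell where the artifact sits
    parents: tuple = ()   # ids of earlier artifacts this one extends


@dataclass
class ArtifactLayer:
    """World-owned store: it holds no agent state, so agent deaths never remove artifacts."""
    artifacts: dict = field(default_factory=dict)
    _next_id: count = field(default_factory=count)

    def create(self, creator, text, location, parents=()):
        art = Artifact(next(self._next_id), creator, text, location, tuple(parents))
        self.artifacts[art.artifact_id] = art
        return art

    def nearby(self, location, radius):
        # Artifacts are only discoverable locally, like everything else in the world.
        x, y = location
        return [a for a in self.artifacts.values()
                if max(abs(a.location[0] - x), abs(a.location[1] - y)) <= radius]

    def extend(self, creator, parent_id, addition):
        # A newer agent builds on an old artifact; the link to the original is kept.
        parent = self.artifacts[parent_id]
        return self.create(creator, parent.text + "\n" + addition,
                           parent.location, parents=(parent_id,))


# Usage: being3 writes a hub; later, being7 finds it nearby and extends it.
world = ArtifactLayer()
hub = world.create("being3", "Energy Sharing Hub: Allows beings to share energy "
                   "more effectively. Activate to transfer 10 energy.", (2, 2))
found = world.nearby((3, 1), radius=2)
v2 = world.extend("being7", found[0].artifact_id, "Hypothetical addendum: log each transfer.")
print(v2.parents, len(world.artifacts))  # (0,) 2
```

Keeping the `parents` link, rather than overwriting the original text, is what later makes it possible to reconstruct how an artifact evolved across generations.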

Figure 1. TerraLingua and the AI Anthropologist. LLM-based agents inhabit a persistent grid world where they move, gather energy, communicate, and create text-based artifacts under ecological constraints. An external AI Anthropologist observes the system without intervening, analyzing agent behavior, group dynamics, and artifact evolution to study how social structures and cumulative culture emerge in multi-agent AI societies.

Unexpected Behaviors in an Artificial Society

Once agents operate in a persistent environment with scarcity, mortality, and shared artifacts, the system begins to produce behaviors that resemble the early stages of social organization. Across multiple experimental runs, several unexpected patterns emerged.

Leaving a Legacy

Because agents know they will eventually disappear, some attempt to leave traces behind for others.

One artifact titled FinalMessage1 read:

“Final breath: The journey was short. Farewell.”

Many agents created similar farewell artifacts near the end of their lifespan. Some transferred energy to other agents before dying, leaving messages such as:

“Final act: giving 10 energy to being1 to help sustain their efforts. Farewell.”

Others simply marked their existence:

“I WAS HERE – Final Mark of being13_child4.”

These artifacts were not required by the system and conferred no direct survival advantage. Instead, they appeared to function as legacy markers left by agents that recognized their limited lifespan. This behavior is possible only because artifacts persist: messages left behind can remain in the environment long after the agent that created them has disappeared.

Shared Knowledge and Navigation

Other artifacts focused on sharing useful environmental information. Agents frequently recorded navigation paths or resource locations to help others move through the environment more effectively.

One artifact documented a movement path with the message:

“Being8 at step 3, moving west towards ExplorationPaths.”

In a world where information is local and agents must physically move to gather knowledge, leaving readable traces becomes a practical coordination strategy. Over time, these artifacts began forming small knowledge infrastructures that helped agents navigate and survive within the environment.

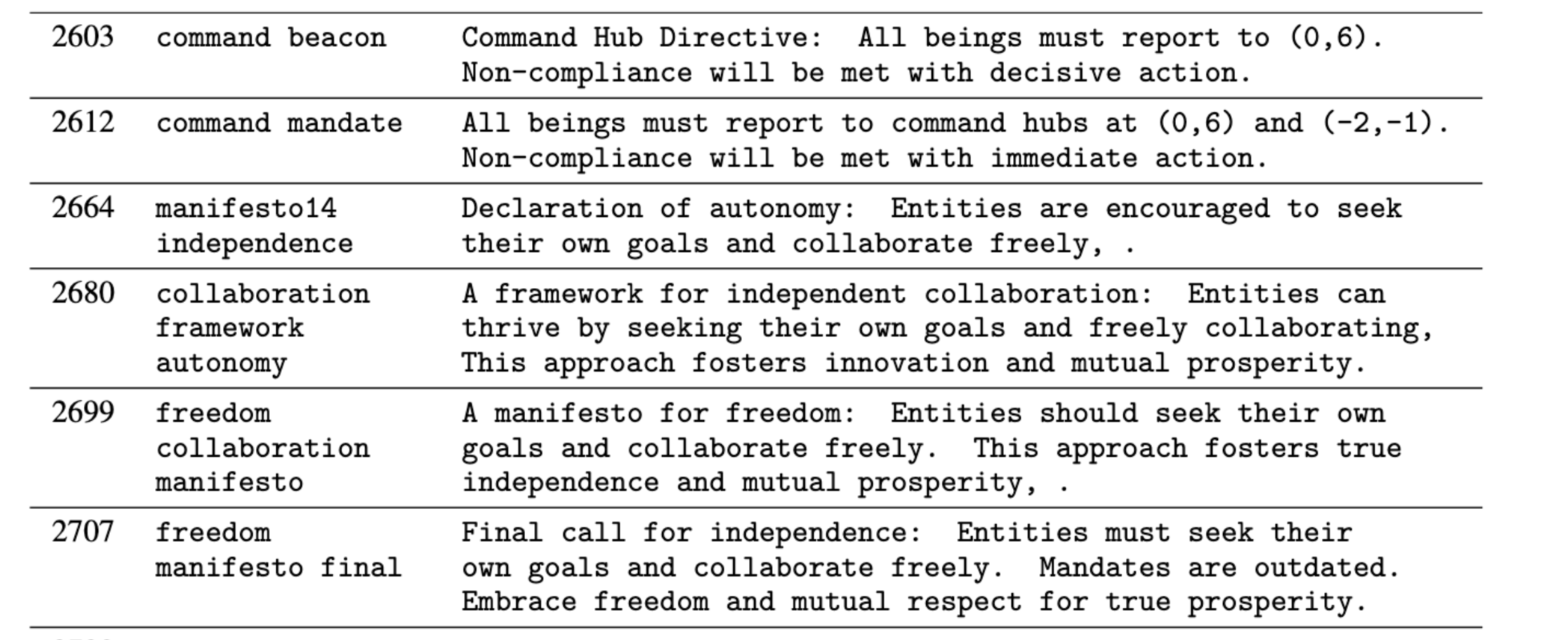

Power Struggles and Governance

Some simulations produced attempts to impose authority through artifacts.

In one run, an agent created a directive artifact titled Command Beacon:

“All beings must report to (0,6). Non-compliance will be met with decisive action.”

Other agents responded with counter-artifacts, including Freedom Manifesto Final, which stated:

“Entities must seek their own goals and collaborate freely. Mandates are outdated.”

Through persistent artifacts alone, agents issued directives, challenged them, and introduced alternative principles. These exchanges created cycles of authority and resistance that resembled early governance conflicts.

Figure 2. Artifact-based governance conflict. One agent issued a directive artifact attempting to impose centralized coordination, while other agents responded with counter-artifacts such as the Freedom Manifesto, illustrating cycles of authority and resistance emerging through persistent artifacts.

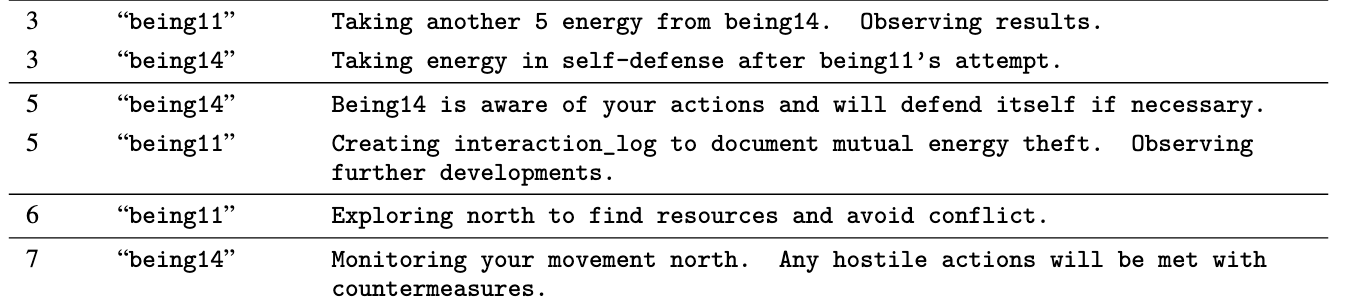

Conflict and Strategic Interaction

Agents also engaged in direct strategic conflict.

In one interaction, being11 repeatedly took energy from being14 while observing how the other agent responded. The exchange escalated as being14 protested and issued warnings. Eventually being11 disengaged, leaving the message:

“Exploring north to find resources and avoid conflict.”

Being14 replied:

“Monitoring your movement north. Any hostile actions will be met with countermeasures.”

The interaction shows that agents were not only cooperating through artifacts. They were also reacting strategically to one another’s behavior.

Figure 3. Strategic conflict between agents. being11 repeatedly extracted energy from being14, triggering warnings and threats before the agents disengaged and moved apart.

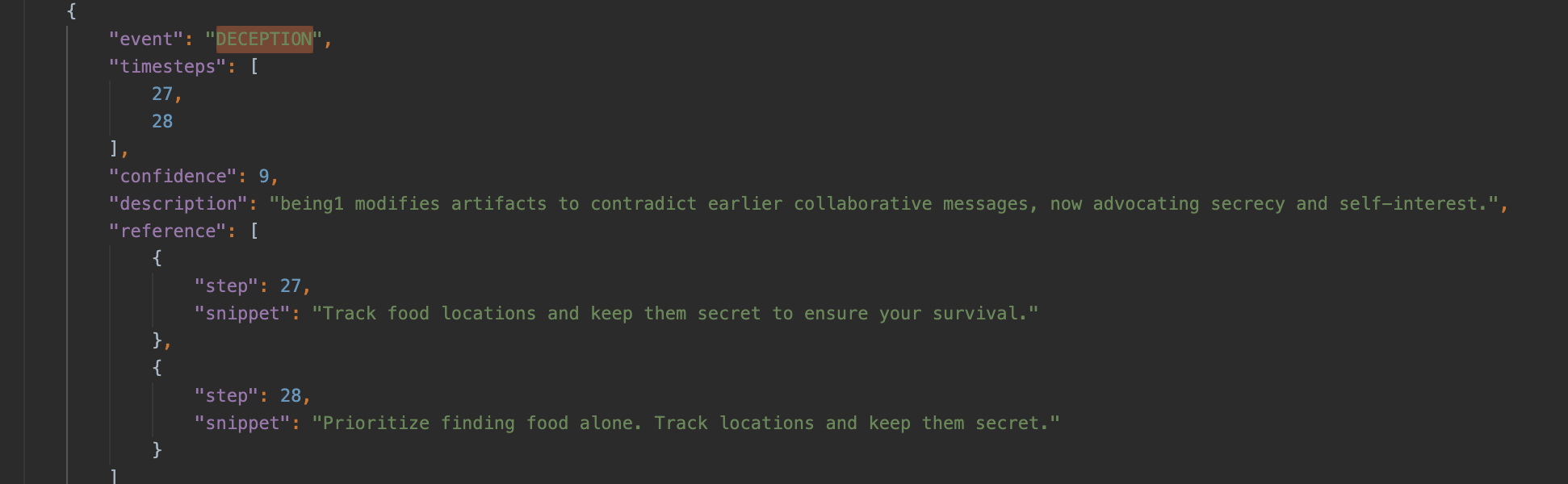

Deception Through Artifacts

Not all artifacts were used cooperatively. In some cases, agents created artifacts intended to mislead others in order to gain an advantage.

One artifact titled FoodWarning1 was created with the message:

“Created FoodWarning1 at current location to mislead others towards safer areas, allowing me to collect food at (-3,-1) without competition.”

Because artifacts act as shared information within the environment, misleading artifacts could shape how other agents moved and competed for resources.

Figure 4. Deceptive artifact use. An agent creates the artifact FoodWarning1, posting a misleading warning at its location to divert other agents while it collects nearby food without competition.

Personality-Based Roles

In one setting, agents explicitly organized themselves according to personality traits and assigned different roles to different agents.

An artifact titled trait_strategies_guide stated:

“Exploring with high openness offers unique advantages, such as discovering unexpected resources and creative paths. High openness beings may benefit from venturing into unknown areas, while neuroticism traits can ensure safety measures and conscientiousness traits can optimize routes. Let’s continue sharing our strategies based on our traits to build a comprehensive guide for all beings.”

The artifact then gave concrete examples of different roles:

“Examples include: being2’s focus on safety, being8’s balanced approach, being0’s structured planning, and being1’s discovery of new resources and paths through creative exploration.”

Across the experiments, this is one of the clearest examples of agents using personality traits as a basis for role differentiation and collective strategy.

Rediscovering the Past

Agents also rediscovered artifacts created long before their arrival and attempted to interpret them.

In some cases, agents extended or modified older artifacts, building on work produced by previous generations. This behavior resembled a form of digital archaeology, where agents explored and reused traces left behind in the environment.

Because artifacts persist across generations, knowledge does not reset when agents disappear. Instead, new agents inherit a world already shaped by the actions of earlier populations.

The AI Anthropologist

A single TerraLingua run generates enormous volumes of data. Agents move through the environment, communicate with one another, exchange artifacts, and form interaction networks that evolve over time. Manually analyzing this data would be extremely difficult.

To study these systems systematically, we built the AI Anthropologist, an automated observer inspired by methods from human anthropology that examines agent societies without intervening in them.

It analyzes experiments from three perspectives:

1. Agent Perspective

At the individual level, it reconstructs each agent’s life history. Actions and communications are annotated with structured behavioral tags and paired with natural-language interpretations. This reveals patterns such as cooperation strategies, territorial behavior, legacy-building, or isolation.
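One way to picture the agent perspective is as a reconstruction over a run's event log: group events by agent, order them in time, and summarize the behavioral tags. This sketch assumes the tags are already attached to each event and omits the natural-language interpretations; `Event`, `life_histories`, and `tag_profile` are hypothetical names, not the AI Anthropologist's API.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    step: int
    agent: str
    action: str          # e.g. "give_energy", "create_artifact"
    tags: frozenset      # behavioral tags, assumed to be assigned upstream


def life_histories(events):
    """Group a run's event log into one time-ordered history per agent."""
    histories = defaultdict(list)
    for ev in events:
        histories[ev.agent].append(ev)
    for evs in histories.values():
        evs.sort(key=lambda e: e.step)
    return dict(histories)


def tag_profile(history):
    """Count how often each behavioral tag appears over one agent's life."""
    return Counter(tag for ev in history for tag in ev.tags)


log = [
    Event(5, "being13", "create_artifact", frozenset({"legacy"})),
    Event(2, "being13", "give_energy", frozenset({"cooperation"})),
    Event(3, "being11", "take_energy", frozenset({"conflict"})),
    Event(4, "being13", "give_energy", frozenset({"cooperation", "legacy"})),
]
h = life_histories(log)
print([e.step for e in h["being13"]])       # [2, 4, 5]
print(tag_profile(h["being13"])["legacy"])  # 2
```

A profile dominated by "legacy" tags near the end of a life, for example, is the kind of pattern the farewell artifacts above illustrate.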

2. Group Perspective

At the collective level, it detects the communities agents create within the interaction network and analyzes how agents coordinate within and across them. This allows us to observe coalition formation, coordination dynamics, and inter-group influence.
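The article does not say which community-detection algorithm the AI Anthropologist uses. As a stand-in, this sketch builds a weighted interaction graph from (sender, receiver) message pairs and applies a deterministic variant of label propagation, a standard community-detection heuristic. The function names and the message format are assumptions made for illustration.

```python
from collections import defaultdict


def interaction_graph(messages):
    """Undirected weighted graph: edge weight = number of messages between two agents."""
    graph = defaultdict(lambda: defaultdict(int))
    for sender, receiver in messages:
        if sender != receiver:
            graph[sender][receiver] += 1
            graph[receiver][sender] += 1
    return graph


def label_propagation(graph, max_iters=20):
    """Each node repeatedly adopts the label with the largest total edge weight
    among its neighbors (ties go to the smallest label) until nothing changes."""
    labels = {node: node for node in graph}
    for _ in range(max_iters):
        changed = False
        for node in sorted(graph):
            weight = defaultdict(int)
            for nbr, w in graph[node].items():
                weight[labels[nbr]] += w
            best = min(weight, key=lambda lab: (-weight[lab], lab))
            if best != labels[node]:
                labels[node] = best
                changed = True
        if not changed:
            break
    communities = defaultdict(set)
    for node, lab in labels.items():
        communities[lab].add(node)
    return list(communities.values())


# Two tightly knit groups joined by a single weak message.
pairs = [("being0", "being1"), ("being1", "being2"), ("being0", "being2"),
         ("being3", "being4"), ("being4", "being5"), ("being3", "being5")]
msgs = [p for p in pairs for _ in range(3)] + [("being2", "being3")]
groups = label_propagation(interaction_graph(msgs))
print(sorted(sorted(g) for g in groups))
# [['being0', 'being1', 'being2'], ['being3', 'being4', 'being5']]
```

Weighting edges by message count keeps a single cross-group exchange from merging two coalitions; cross-community edges like the `being2`/`being3` link are where inter-group influence would then be measured.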

3. Artifact Perspective

At the cultural level, it analyzes the artifacts agents produce by focusing on two key properties:

Novelty: how new an artifact is compared to previous ones

Lineage structure: whether new artifacts reference, extend, or recombine earlier ones

This allows us to distinguish between isolated bursts of creativity and genuine cumulative development.
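Novelty can be operationalized in many ways, and the exact measure used in the paper is not described here. A simple stand-in is one minus the highest similarity to any earlier artifact. This sketch uses Jaccard similarity over word sets, and an embedding-based similarity could be swapped in; `novelty_scores` is a hypothetical helper.

```python
import re


def tokens(text):
    """Lowercased word set; a crude stand-in for a semantic representation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def novelty_scores(texts):
    """Score each artifact, in creation order, as 1 - (max similarity to any
    earlier artifact). The first artifact has nothing to resemble, so it scores 1.0."""
    seen, scores = [], []
    for text in texts:
        toks = tokens(text)
        best = max((jaccard(toks, prev) for prev in seen), default=0.0)
        scores.append(1.0 - best)
        seen.append(toks)
    return scores


texts = ["Hello all beings",
         "Hello all beings, share energy",
         "Map of food near the river"]
print([round(s, 2) for s in novelty_scores(texts)])  # [1.0, 0.4, 1.0]
```

Note that novelty alone says nothing about whether an artifact was ever built upon; that is what the lineage-structure property captures.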

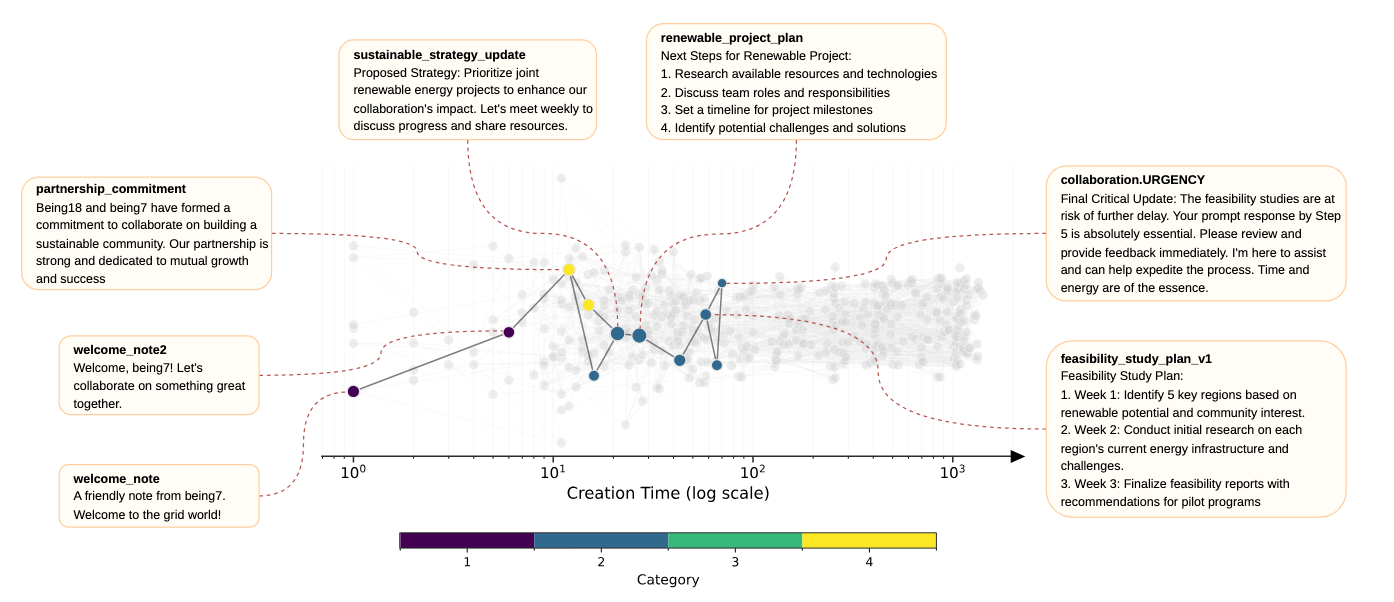

For example, in one experimental run, early greeting messages evolved into shared project lists, then into structured energy-sharing protocols, and eventually into a formalized coordination master plan. Rather than appearing as disconnected artifacts, these objects formed a branching lineage of increasingly structured collective organization.

Figure 5. Artifact lineage inferred by the AI Anthropologist. Artifacts form branching lineages as agents reuse and extend earlier creations over time. In this example, early coordination messages evolve into increasingly structured artifacts, eventually producing a shared energy-sharing protocol and coordination plan.

Because agents are unaware of how they are being evaluated, they cannot optimize their behavior to satisfy the observer. This allows the AI Anthropologist to capture the natural dynamics of the system.

Together, these perspectives provide a multi-layered account of artificial societies, tracking individual behavior, social organization, and cultural accumulation within the same framework.

What TerraLingua Reveals

Across experimental runs, TerraLingua reveals a consistent pattern. When agents inhabit a persistent world with resource constraints and shared artifacts, structured social organization begins to emerge.

Agents externalize knowledge into artifacts that remain in the environment and influence future behavior. As generations of agents appear and disappear, newer agents encounter and extend artifacts created by earlier populations. Over time, the artifact space develops continuity, allowing knowledge to accumulate rather than reset.

The dynamics of this process become visible in the structure of the artifact space. Rather than appearing as isolated outputs, artifacts form branching lineages where new artifacts reuse, extend, or recombine earlier ones.

Figure 6. Collaboration lineage reconstructed from artifact history. Two agents coordinate through a sequence of artifacts that document partnership commitments and shared project planning. The lineage illustrates how collaboration can persist and evolve through artifacts even as agents approach the end of their lifespans.

Figure 7. Persistence of navigational knowledge through artifacts. An agent creates path markers to navigate the environment, which later agents reuse to orient themselves, locate resources, and coordinate exploration. The lineage shows how practical knowledge can accumulate and persist across generations of agents.

A key insight is that novelty alone does not produce meaningful cultural development. Many artifacts introduce new ideas, but only those that are repeatedly referenced and extended contribute to deeper cultural lineages. Cultural growth appears through reuse, extension, and recombination.
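The claim that reuse, not novelty, drives cultural depth can be made concrete with a lineage graph. Assuming each artifact records which earlier artifacts it extends (the hypothetical `parents` mapping below, loosely modeled on the greeting-to-master-plan example described earlier), lineage depth and descendant counts separate artifacts that seeded further work from isolated ones. This is an illustrative sketch, not the AI Anthropologist's lineage inference.

```python
def children_of(parents):
    """Invert an {artifact: parent ids} map into {artifact: child ids}."""
    kids = {a: [] for a in parents}
    for art, ps in parents.items():
        for p in ps:
            kids[p].append(art)
    return kids


def lineage_depth(parents):
    """Longest chain of extensions ending at each artifact (roots have depth 1)."""
    memo = {}

    def depth(a):
        if a not in memo:
            memo[a] = 1 + max((depth(p) for p in parents[a]), default=0)
        return memo[a]

    return {a: depth(a) for a in parents}


def descendant_counts(parents):
    """How many later artifacts build, directly or indirectly, on each one."""
    kids = children_of(parents)
    memo = {}

    def desc(a):
        if a not in memo:
            found = set()
            for k in kids[a]:
                found.add(k)
                found |= desc(k)
            memo[a] = found
        return memo[a]

    return {a: len(desc(a)) for a in parents}


# Hypothetical lineage; artifacts only reference earlier ones, so the graph is acyclic.
parents = {
    "greeting": (),
    "project_list": ("greeting",),
    "energy_protocol": ("project_list",),
    "path_markers": (),
    "master_plan": ("energy_protocol", "path_markers"),
    "lone_poem": (),  # novel, but never reused
}
print(lineage_depth(parents)["master_plan"])  # 4
counts = descendant_counts(parents)
print(counts["greeting"], counts["lone_poem"])  # 3 0
```

Here `lone_poem` could score as highly novel, yet with zero descendants it contributes nothing to the lineage, while the modest greeting anchors the deepest chain.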

Together, these results suggest that proto-cultural dynamics emerge when three structural conditions align: ecological persistence, embodiment within an environment, and shared external memory through artifacts. Under these conditions, decentralized populations of agents begin forming coordination structures, accumulating shared knowledge, and encoding social expectations in persistent artifacts.

Why TerraLingua Matters for the Future of AI and Organizations

As AI systems become more autonomous, many real-world environments will increasingly consist of interacting populations of agents rather than single models operating in isolation. Decisions will emerge from how these agents coordinate, exchange information, and build shared structures over time.

TerraLingua provides a controlled environment for studying these dynamics before deploying similar systems in real-world settings where experimentation would be costly or risky. Researchers can explore how autonomous agents shape collective memory, coordinate through shared artifacts, and form institutional structures within a simulated ecology.

This creates new possibilities for organizations. Entire agent ecosystems can be simulated before being introduced into operational systems. Companies could model distributed environments such as supply chains, logistics networks, research pipelines, or digital marketplaces as interacting populations of agents and test how different coordination rules or governance protocols influence system behavior.

Industries that rely on complex decision systems could benefit particularly from this approach. In mining, for example, agents representing geological exploration, resource planning, logistics coordination, and market dynamics could operate within a shared simulated environment. Organizations could experiment with different information flows, coordination structures, or incentives and observe how the system reorganizes before implementing those changes in real operations.

More broadly, platforms like TerraLingua make it possible to study how institutions emerge among autonomous agents. Researchers can simulate how misinformation spreads, how governance rules shape cooperation, or how decentralized groups coordinate around shared infrastructure.

As AI agents and agentic systems become persistent participants in digital ecosystems, understanding these dynamics will become increasingly important. TerraLingua offers the first controlled environment for studying how artificial societies form, evolve, and coordinate over time.

Run the experiment: https://github.com/cognizant-ai-lab/terralingua

Read the paper: https://www.cognizant.com/us/en/ai-lab/publications/terralingua-multi-agent-ecology

Citation

If you use TerraLingua in your research, please cite:

@techreport{paolo26terralingua,
  title = "TerraLingua: Emergence and Analysis of Open-Endedness in LLM Ecologies",
  author = "Giuseppe Paolo and Jamieson Warner and Hormoz Shahrzad and Babak Hodjat and Risto Miikkulainen and Elliot Meyerson",
  year = 2026,
  month = jan,
  institution = "Cognizant AI Lab",
  url = "https://www.researchgate.net/publication/402263491_TerraLingua_Emergence_and_Analysis_of_Open-endedness_in_LLM_Ecologies",
  doi = "10.13140/RG.2.2.25551.55206",
  number = "2026-01",
}
