April 15, 2026

From Retrieval to Discovery: Rethinking How AI Agents Generate Ideas with the Caesar Framework

A new architecture for AI agents that moves beyond retrieval to enable deeper reasoning, exploration, and truly novel insight.

Key Takeaways

Most AI agents today optimize for retrieval, not discovery, limiting their ability to generate novel insights

Caesar introduces a graph-based architecture that enables associative reasoning across disparate information

Its two-phase design combines deep exploration with adversarial refinement to produce more creative outputs

Experimental results show significant gains in novelty, usefulness, and surprise compared to state-of-the-art agents

This approach signals a shift toward AI systems that support research, innovation, and decision-making at a deeper level

For all the progress in large language models and autonomous agents, most systems today still operate within a fundamentally constrained paradigm.

They retrieve. They summarize. They recombine. But they rarely discover.

Modern agentic frameworks, including retrieval-augmented generation and ReAct-style systems, treat the web as a sequence of disconnected documents. They optimize for precision by finding the most relevant content for a given query, but they do not meaningfully explore beyond it. The result is a kind of structural tunnel vision. Agents repeatedly surface similar information, reinforce existing perspectives, and converge on answers that are correct but often unoriginal.

This limitation becomes more pronounced in open-ended tasks. When the goal shifts from answering a question to generating new ideas, these systems struggle. They lack a representation of how concepts relate to one another across contexts, and they lack a mechanism for deliberately challenging their own conclusions.

Human researchers do not work this way. They build mental maps and follow threads across domains. They test hypotheses, revisit assumptions, and refine their thinking iteratively.

Closing that gap requires more than better prompts or larger models. It requires a different architecture.

Researchers at Cognizant AI Lab developed Caesar, an agentic framework that advances autonomous web search by combining graph-based exploration with adversarial refinement of artifacts. Rather than simply retrieving information, Caesar is designed to explore, connect, and iteratively improve ideas.

This shift matters because many of the most important problems in business and society are not about finding known answers. They are about discovering new ones. Whether in drug discovery, climate modeling, market strategy, or scientific research, the bottleneck is not access to information, but rather the ability to connect it in new ways.

Why Today’s Agents Fall Short

Most modern agents rely on iterative search and retrieval loops: they issue queries, retrieve documents, extract relevant content, and repeat. While this creates the appearance of reasoning, the process remains fundamentally local. Each step is guided by immediate relevance, not by a global understanding of the problem space.

This leads to several persistent issues.

First, agents tend to revisit the same regions of information repeatedly. Without a structured memory of prior exploration, they lack awareness of what has already been covered. This results in redundancy and shallow coverage.

Second, they struggle to connect ideas across domains. Because information is processed in isolation, relationships between concepts are rarely surfaced unless they are explicitly stated in the same source.

Third, they converge too quickly. Once an answer appears plausible, there is little incentive or mechanism to challenge it. This leads to outputs that are coherent but often conventional.

These limitations are not simply implementation details; they are structural. They reflect an architecture designed for retrieval, not for discovery.
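To make the first issue concrete, here is a minimal sketch of a purely local retrieval loop. It is a toy illustration, not any real agent framework: the corpus, the word-overlap `relevance` score, and the function names are all assumptions made up for this example. Because each step looks only at the most recently retrieved text and keeps no record of what was already seen, the loop keeps landing on the same document.

```python
def relevance(query, doc):
    """Toy relevance score (assumption): number of shared lowercase words."""
    return len(set(query.lower().split()) & set(doc.lower().split()))


def greedy_retrieval_loop(query, corpus, steps=3):
    """Retrieve the single most relevant document at each step.

    No memory of earlier steps is kept, so nothing prevents the loop
    from returning the same document again and again.
    """
    trace = []
    for _ in range(steps):
        best = max(corpus, key=lambda doc: relevance(query, doc))
        trace.append(best)
        # Purely local reformulation: the next query is just the last result.
        query = best
    return trace


# Hypothetical three-document corpus.
corpus = [
    "solar panels convert sunlight",
    "battery storage for solar power",
    "coral reefs and ocean heat",
]
trace = greedy_retrieval_loop("solar panels efficiency", corpus)
print(trace)
```

In this toy run every step returns the first document, and the other two are never explored. A real agent reformulates queries in richer ways, but the structural point is the same: without a record of prior exploration, relevance alone tends to pull the search back to familiar ground.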

Introducing Caesar: A System Designed for Discovery

Caesar was built to address these limitations directly.

At its core, Caesar treats the process of information gathering as a structured exploration problem rather than a sequence of independent retrieval steps. As it navigates the web, it constructs a dynamic knowledge graph that captures relationships between concepts, sources, and intermediate insights.

This graph acts as a persistent memory of the agent’s reasoning process. It allows Caesar to track where it has been, identify unexplored areas, and make decisions based on the broader structure of information rather than immediate relevance alone.
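The idea of a graph as persistent memory can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Caesar's actual data structure: the class name `ExplorationGraph`, its methods, and the page names are hypothetical. It shows the two capabilities described above: tracking where the agent has been, and listing known but unexplored nodes.

```python
from collections import defaultdict


class ExplorationGraph:
    """Hypothetical exploration memory: an undirected graph plus a visited set."""

    def __init__(self):
        self.edges = defaultdict(set)  # node -> set of neighbouring nodes
        self.visited = set()           # nodes the agent has already explored

    def add_link(self, source, target):
        """Record a relationship discovered between two nodes."""
        self.edges[source].add(target)
        self.edges[target].add(source)

    def mark_visited(self, node):
        """Remember that a node has been explored (and make sure it exists)."""
        self.visited.add(node)
        self.edges[node]  # touching the defaultdict registers the node

    def frontier(self):
        """Nodes that are known but not yet explored, sorted for determinism."""
        return sorted(n for n in self.edges if n not in self.visited)


g = ExplorationGraph()
g.mark_visited("page_a")
g.add_link("page_a", "page_b")
g.add_link("page_a", "page_c")
g.mark_visited("page_b")
print(g.frontier())  # known but unexplored nodes
```

With this kind of structure, the choice of what to read next can depend on the shape of the whole graph (for example, preferring frontier nodes far from heavily visited regions) rather than on the relevance of a single document.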

But the system goes further. Caesar introduces an adversarial refinement process that operates on the artifacts it produces. Instead of generating a single answer, the agent creates intermediate outputs and then actively critiques them. It identifies gaps, contradictions, and weak assumptions, and then generates new queries to address those weaknesses.

This creates a feedback loop between exploration and synthesis.

Exploration expands the space of possible ideas, while refinement narrows and strengthens them. Together, these mechanisms allow Caesar to move beyond surface-level synthesis and toward deeper, more structured reasoning.

How Caesar Works in Practice

Caesar’s behavior can be understood as a continuous cycle between two phases: exploration and refinement.

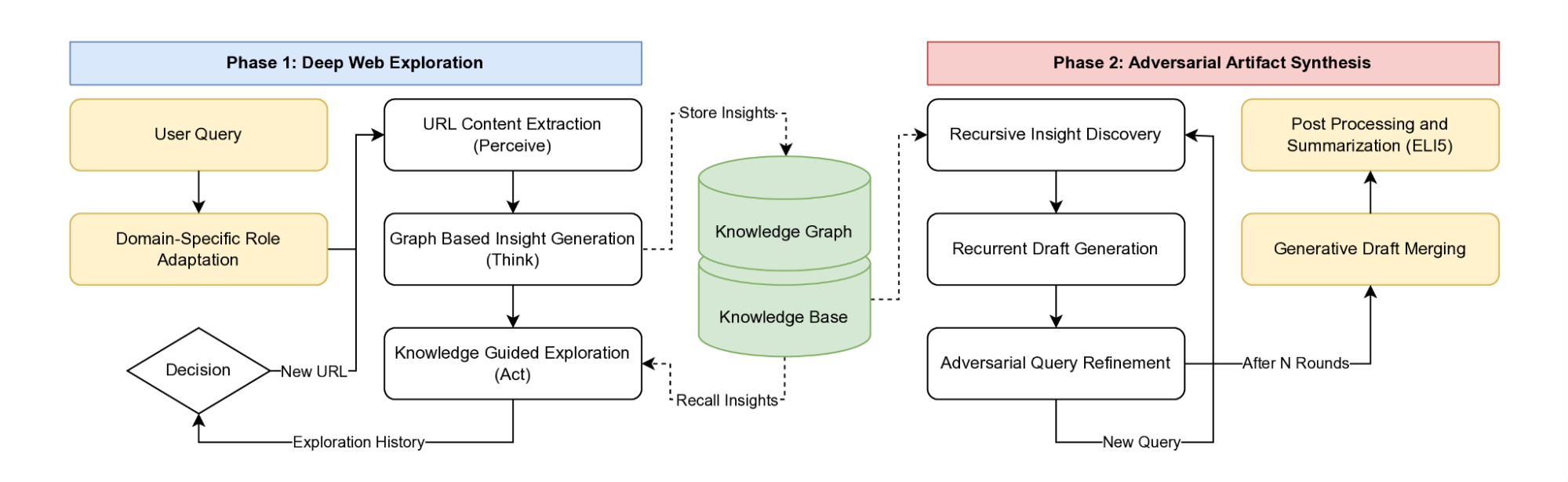

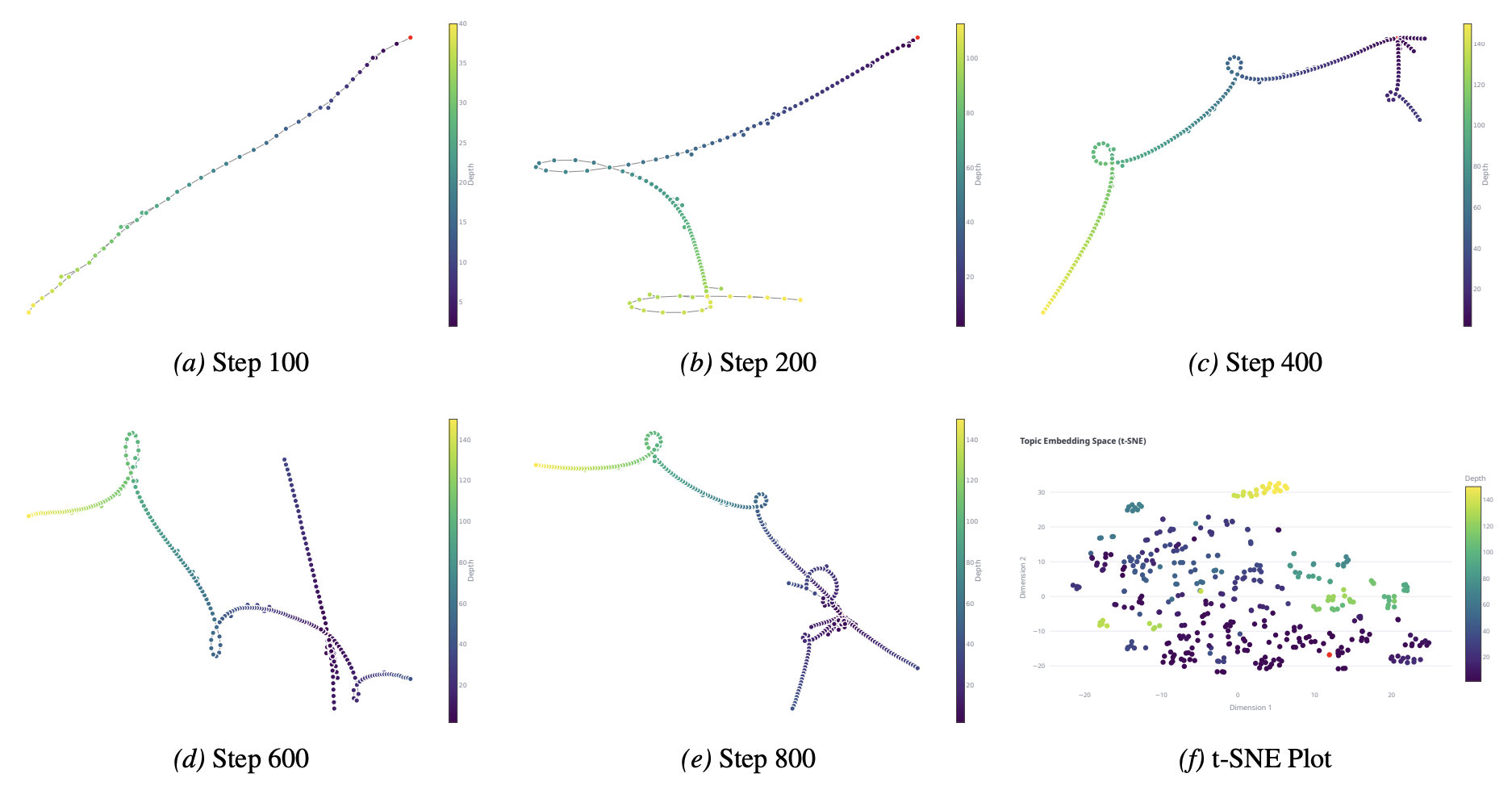

Figure 1. Visualization of the Caesar architecture. The Caesar framework operates through two decoupled components. Phase 1 consists of deep web exploration driven by a dynamic context-aware policy, while Phase 2 relies on an adversarial draft refinement loop that actively seeks novel perspectives rather than confirming established priors.

In the first phase, the agent performs graph-based exploration. It gathers information while simultaneously building a structured representation of how that information connects. Each new piece of data is evaluated in the context of existing nodes, allowing the system to identify reinforcing or conflicting relationships.
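One way to picture "evaluating new data in the context of existing nodes" is as typed edge creation. The sketch below is an assumption-laden toy, not the method used in Caesar: it reduces each piece of information to a hypothetical `Claim` with a topic and a +1/-1 stance, whereas a real system would presumably rely on a language model to judge agreement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical node: a statement about a topic from some source."""
    topic: str
    stance: int   # +1 supports the topic statement, -1 disputes it
    source: str


def relate(new, existing):
    """Return typed edges between a new claim and existing claims on the same topic."""
    edges = []
    for old in existing:
        if old.topic != new.topic:
            continue  # unrelated topics get no edge in this toy model
        kind = "reinforces" if old.stance == new.stance else "conflicts"
        edges.append((new.source, kind, old.source))
    return edges


existing = [
    Claim("x reduces cost", +1, "source_a"),
    Claim("x reduces cost", -1, "source_b"),
    Claim("y scales well", +1, "source_c"),
]
new_edges = relate(Claim("x reduces cost", +1, "source_d"), existing)
print(new_edges)
```

Keeping conflicting edges, rather than discarding the minority view, is what would later give a refinement step something to probe.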

This enables a form of associative reasoning that is difficult to achieve with traditional retrieval systems. Instead of simply finding similar information, the agent can surface connections that are structurally meaningful but not immediately obvious.
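As a rough illustration of how a graph can surface a connection that no single source states, the sketch below runs a breadth-first search between two concepts. The concept names and the adjacency dictionary are invented for the example, and plain shortest-path search is a stand-in chosen for simplicity; the source does not say which traversal Caesar uses.

```python
from collections import deque


def connecting_path(graph, start, goal):
    """Breadth-first search: shortest chain of concepts from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in sorted(graph.get(path[-1], ())):  # sorted for determinism
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


# Hypothetical concept graph; each edge could come from a different source.
concepts = {
    "ant foraging": {"pheromone trails"},
    "pheromone trails": {"ant foraging", "shortest-path routing"},
    "shortest-path routing": {"pheromone trails", "network traffic"},
    "network traffic": {"shortest-path routing"},
}
path = connecting_path(concepts, "ant foraging", "network traffic")
print(" -> ".join(path))
```

Each individual link here might appear in a separate document. Only the accumulated graph makes the multi-hop chain between the two end concepts visible.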

In the second phase, the system enters iterative refinement.

Here, Caesar generates candidate outputs and then challenges them. It formulates new questions designed to probe weaknesses in its current understanding. These questions drive further exploration, which feeds back into the graph and improves the next iteration of the output.

This process continues until the system converges on a result that is not only coherent, but also robust to critique.
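The control flow of such a loop can be sketched as follows. This is a minimal sketch under stated assumptions: `refine`, the `toy_*` helpers, and the idea of a draft as a set of covered aspects are all hypothetical stand-ins. In a real system the critique, exploration, and revision steps would be model calls and web queries.

```python
def refine(draft, critique, explore, revise, max_rounds=5):
    """Alternate critique and exploration until no weaknesses remain or the budget runs out."""
    for round_no in range(max_rounds):
        weaknesses = critique(draft)
        if not weaknesses:
            return draft, round_no  # converged: the critic has nothing left to attack
        evidence = [explore(w) for w in weaknesses]  # one targeted query per weakness
        draft = revise(draft, evidence)
    return draft, max_rounds


# Toy stand-ins (assumptions): a draft is the set of aspects it covers.
REQUIRED = {"mechanism", "cost", "risk"}


def toy_critique(draft):
    return sorted(REQUIRED - draft)  # aspects the draft fails to address


def toy_explore(weakness):
    return weakness  # stand-in for a targeted web query


def toy_revise(draft, evidence):
    return draft | {evidence[0]}  # address one weakness per round


final, rounds = refine({"mechanism"}, toy_critique, toy_explore, toy_revise)
print(final == REQUIRED, rounds)
```

The `max_rounds` budget is a design choice added for this sketch: an adversarial critic can in principle always find something to object to, so a practical loop needs a stopping rule beyond "no weaknesses found".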

The key insight is that discovery requires iteration. The system must revisit and refine its understanding over time instead of gathering information once.

What the Results Show

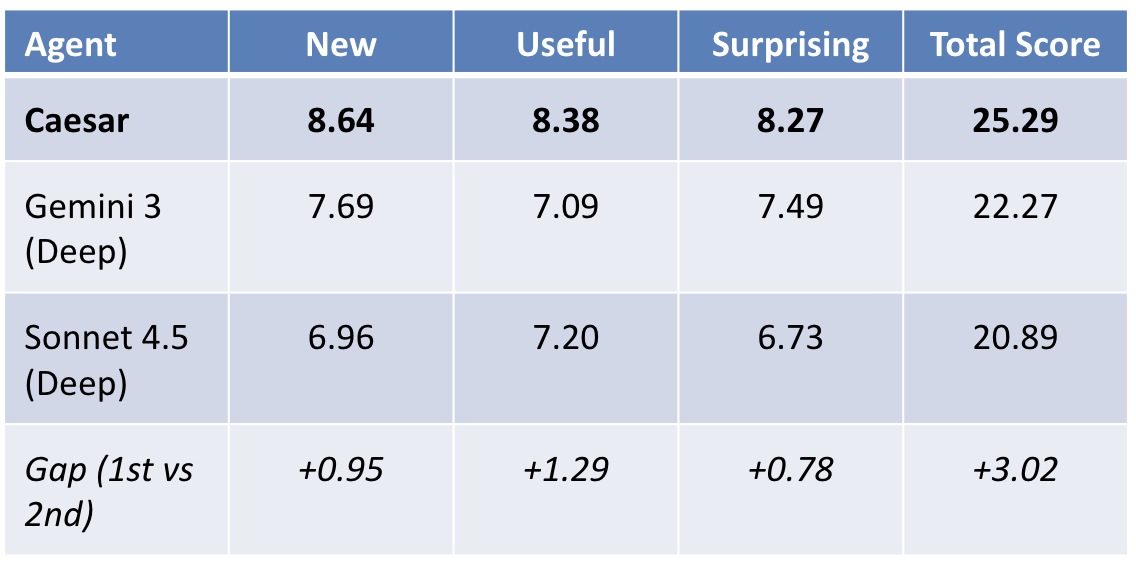

The effectiveness of this approach is reflected in the experimental results. Caesar was evaluated on tasks designed to measure creativity across three dimensions: novelty, usefulness, and the ability to generate surprising connections. Across these metrics, it consistently outperformed leading AI research agents.

Figure 2. Performance on creativity benchmarks. Caesar outperforms state-of-the-art agents across novelty, usefulness, and surprising insight metrics, demonstrating a stronger ability to generate original and meaningful outputs.

The improvements were particularly strong in novelty and surprise, indicating that the system was able to move beyond conventional patterns of reasoning. While many baseline agents tend to converge on safe, widely represented ideas, Caesar more frequently surfaces connections that are less obvious but still meaningful and grounded.

This difference becomes clearer when looking at how the system explores information internally.

Figure 3. Evolution of the knowledge graph during exploration. As exploration progresses, Caesar expands beyond initial query paths, branching into new conceptual areas and revisiting earlier nodes to build deeper, more connected structures.

Rather than following a narrow, linear search trajectory, Caesar dynamically reshapes its exploration over time. It may begin with a focused investigation, but then expands outward, revisiting earlier concepts and linking them to newly discovered information. This allows the system to avoid premature convergence and instead build a richer representation of the problem space.

That structural difference in exploration is what enables more original outputs. By maintaining and expanding a connected graph of ideas, Caesar can identify relationships that would not emerge through isolated retrieval steps.

Importantly, these gains were not dependent on longer outputs or more verbose explanations. Even under constrained conditions, Caesar maintained higher levels of originality. This suggests that the improvements are rooted in the structure of the system, not just in surface-level generation or verbosity.

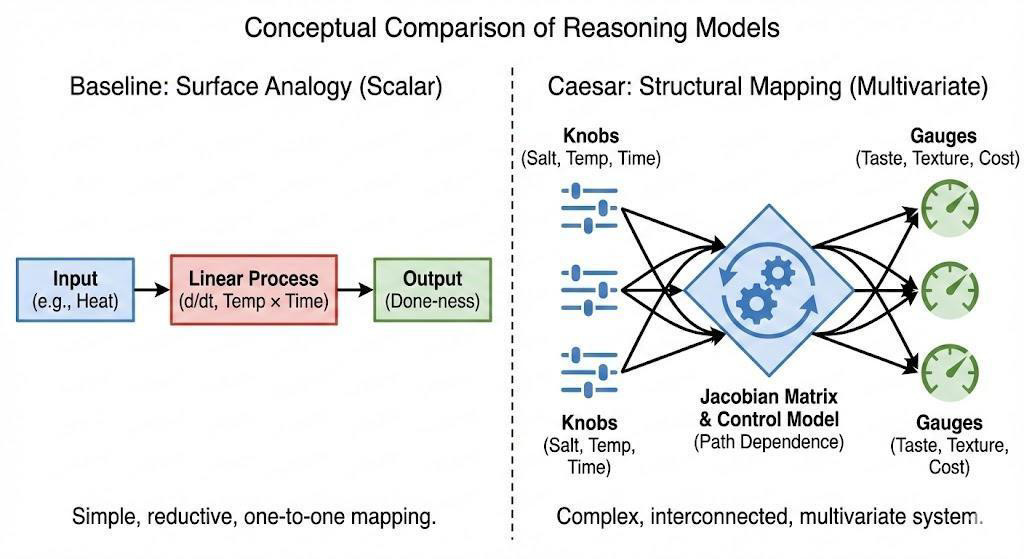

Figure 4. Example of creative synthesis across agents. Compared to baseline agents that produce simpler, more direct responses, Caesar generates multi-layered outputs that integrate concepts across domains, demonstrating deeper synthesis and reasoning.

Qualitatively, this difference appears in the nature of the outputs themselves. Where other agents produce straightforward summaries or single-domain analogies, Caesar more often constructs multi-step explanations that combine ideas from different contexts. This reflects not just better retrieval, but deeper synthesis.

Ablation studies further reinforce this point. Reducing the depth of exploration or removing the refinement loop leads to a measurable decline in performance. When exploration is limited, the system loses access to diverse perspectives. When refinement is removed, outputs become less coherent and less robust to critique. Both components are necessary to achieve the observed gains.

Taken together, these results highlight a broader takeaway: creativity in AI systems is not an emergent property of scale alone. It depends on how systems explore information, how they represent relationships between ideas, and whether they are able to challenge and refine their own conclusions over time.

Why This Matters

Most enterprise AI today is focused on efficiency. It helps organizations automate workflows, reduce costs, and improve access to information. These are important capabilities, but they address only part of the problem.

In many domains, the limiting factor is not speed. It is insight.

Strategic decisions, scientific breakthroughs, and innovation initiatives all depend on the ability to identify patterns that are not immediately visible. They require connecting ideas across domains, exploring alternative hypotheses, and questioning assumptions. This is where current AI systems fall short.

Caesar points toward a different role for AI. Instead of acting solely as a retrieval layer, systems like Caesar can function as partners in discovery, helping organizations explore complex problem spaces more effectively. They can surface non-obvious connections, generate alternative perspectives, and support deeper forms of reasoning.

This has implications across industries. In research, it can accelerate the identification of new hypotheses. In business strategy, it can reveal overlooked risks or opportunities. In product development, it can inspire new combinations of capabilities or approaches.

The broader shift is from using AI to find answers faster to using AI to find better answers.

Ultimately, Caesar represents an early step toward a different class of AI systems that do not just retrieve and summarize knowledge, but actively participate in its creation.

With such systems, researchers and decision-makers can begin with a richer, more connected understanding of the problem space rather than a narrow set of inputs. From there, human judgment, intuition, and domain expertise can guide the next steps.

As AI systems become more autonomous, the question is no longer just how well they can answer questions. It is whether they can help us discover what we do not yet know.

Caesar suggests that with the right architecture, they can.

Research scientist specializing in LLMs, neuroevolution, evolutionary algorithms, and applications of neural networks