April 22, 2026

Hierarchy-Informed Optimization for Brain Modeling: A Breakthrough in AI and Neuroscience

Nominated for Best Paper in the Real World Applications track at GECCO 2026, this research introduces a structured approach to optimization that turns brain models from data-fitting tools into systems that generalize, predict behavior, and scale to real-world AI challenges.

Key Takeaways

- Complex AI systems, like brain models, are difficult to optimize. Current methods can match the data, but often fail to produce results that hold up across different people or real-world scenarios.

- The core issue is not just model design, but how the model is trained. When optimization is unconstrained, it can find answers that look right but do not capture what is actually going on.

- This research introduces a simple shift: guide the training process using the brain’s natural structure. Instead of learning everything at once, the model builds understanding step by step.

- This approach leads to more stable and reliable models. They not only fit the data, but also generalize better and can predict meaningful outcomes like behavior.

- The idea extends beyond brain modeling. It shows that in complex systems, how you guide the search process can be just as important as the model itself.

Modern AI and computational neuroscience are converging on a common challenge. As models become more realistic, they also become harder to optimize. Whole-brain models aim to capture how activity emerges across many interconnected brain regions, combining structural connectivity with neural dynamics. These systems involve many interacting parameters, exhibit nonlinear behavior, and often lack clear gradients to guide learning.

Evolutionary algorithms are well suited for this setting. They can explore complex search spaces without relying on differentiability, making them a natural fit for large-scale brain modeling. At the same time, their behavior highlights an important challenge.

They are effective at finding solutions that fit data.

However, these solutions do not always generalize well or provide clear explanatory insight.

A model may reproduce the brain activity of an individual subject with high accuracy, yet fail when applied to others. More importantly, it may fail to predict behavior, which is ultimately what these models are meant to capture.

This gap between fitting data and understanding the system is the core problem.

In our latest paper, “Evolution With Purpose: Hierarchy-Informed Optimization of Whole-Brain Models,” we address this challenge by introducing structure into the optimization process itself. The central idea is simple but consequential: optimization should not be treated as an unconstrained search, but should instead reflect the structure of the system being modeled.

By aligning optimization with the brain’s natural hierarchy, we show that it is possible to build models that are not only accurate, but also stable, generalizable, and predictive of behavior.

This work has been recognized with a Best Paper Award nomination (Real World Applications track) at GECCO 2026, underscoring its significance for both research and real-world impact.

Why Whole-Brain Models Fall Short Today

Whole-brain models such as Dynamic Mean Field models are designed to reproduce large-scale patterns of brain activity observed through fMRI. They simulate how interactions between brain regions give rise to functional connectivity, which reflects how different parts of the brain coordinate over time.
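The article does not spell out its fitness function. A common choice in whole-brain modeling, assumed here, computes functional connectivity (FC) as the region-by-region Pearson correlation of regional time series and scores a simulation by correlating the upper triangles of the simulated and empirical FC matrices. The helper names and synthetic data below are illustrative only.

```python
import numpy as np


def functional_connectivity(timeseries: np.ndarray) -> np.ndarray:
    """Region-by-region Pearson correlation matrix.

    timeseries: shape (n_timepoints, n_regions), e.g. regional BOLD signals.
    """
    # np.corrcoef treats each row as a variable, so pass (regions, time)
    return np.corrcoef(timeseries.T)


def fc_fit(simulated_fc: np.ndarray, empirical_fc: np.ndarray) -> float:
    """Pearson correlation between the upper triangles of two FC matrices."""
    iu = np.triu_indices_from(empirical_fc, k=1)  # skip the all-ones diagonal
    return float(np.corrcoef(simulated_fc[iu], empirical_fc[iu])[0, 1])


# Synthetic stand-ins for empirical and simulated BOLD (not real fMRI data)
rng = np.random.default_rng(0)
bold_empirical = rng.standard_normal((200, 10))
bold_simulated = bold_empirical + 0.3 * rng.standard_normal((200, 10))
fc_emp = functional_connectivity(bold_empirical)
fc_sim = functional_connectivity(bold_simulated)
print(f"FC fit: {fc_fit(fc_sim, fc_emp):.3f}")
```

Only the upper triangle is compared because FC matrices are symmetric with a constant diagonal, so including the rest would double-count pairs and inflate the score.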

To do this, the models rely on many parameters that govern excitation, inhibition, coupling strength, and noise. These parameters interact in complex and nonlinear ways, creating a high-dimensional optimization problem with many possible solutions.

This leads to a well-known issue. Multiple parameter configurations can produce similar outputs. That means a model can appear correct without actually capturing the underlying system.
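To make this identifiability problem concrete, here is a deliberately simple toy, not a Dynamic Mean Field model: its output depends on two hypothetical parameters, `gain` and `coupling`, only through their product. Every pair with the same product fits the target perfectly, so the data alone cannot tell them apart.

```python
import numpy as np


def toy_model(gain, coupling, drive):
    # Hypothetical toy: gain and coupling only ever appear as a product
    return np.tanh(gain * coupling * drive)


drive = np.linspace(-2.0, 2.0, 50)
target = toy_model(1.0, 2.0, drive)

# Three different parameter configurations, all with gain * coupling == 2
errors = {}
for gain, coupling in [(1.0, 2.0), (2.0, 1.0), (4.0, 0.5)]:
    residual = toy_model(gain, coupling, drive) - target
    errors[(gain, coupling)] = float(np.mean(residual ** 2))
print(errors)
```

A real whole-brain model has far more parameters and subtler trade-offs, but the effect is the same: a perfect fit does not pin down a unique parameter set.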

In practice, evolutionary algorithms often converge to solutions that are highly specific to individual subjects. These solutions can achieve excellent fit, but they are fragile. When evaluated across subjects, or when parameters are averaged, they frequently become unstable or collapse entirely.

So while the model fits the data, it does not generalize. And if it does not generalize, it cannot be used to understand behavior or support real-world applications.

Introducing Structure into the Search

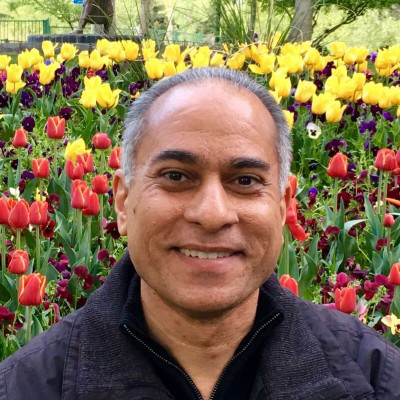

The key idea in this work is to use what we already know about the brain to guide optimization. Cortical organization follows a hierarchy that ranges from unimodal sensory and motor systems to transmodal regions associated with higher-order cognition. This hierarchy reflects how information is processed, from perception to abstract reasoning.

We use this structure to guide optimization through a method called Hierarchy-Informed Curriculum Optimization, or HICO.

Instead of optimizing all parameters at once, HICO introduces them in stages. It begins with global parameters that define overall system behavior. It then progressively introduces parameters associated with higher-order regions, followed by attention networks, and finally sensory and motor systems.

At each phase, only a subset of parameters is allowed to change, while previously learned parameters remain fixed. This creates a structured progression through the search space, where complexity is introduced gradually rather than all at once, analogous to learning from simple to more complex structures.
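The staging described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: a simple (1+λ) evolution strategy stands in for whatever evolutionary algorithm HICO actually uses, and the function name `staged_es`, the group names and indices, and the toy objective are all hypothetical. Each stage mutates only its own parameter group; groups from earlier stages are never touched again, and groups not yet unlocked stay at their initial values.

```python
import numpy as np


def staged_es(objective, x0, groups, stage_order,
              generations=200, offspring=8, sigma=0.1, seed=0):
    """Curriculum-style (1+lambda) evolution strategy (illustrative sketch).

    objective:   maps a parameter vector to a fitness (higher is better).
    groups:      dict mapping a group name to the parameter indices it owns.
    stage_order: group names in the order they are unlocked; once a stage
                 ends, its parameters are never mutated again (frozen).
    """
    rng = np.random.default_rng(seed)
    best = np.asarray(x0, dtype=float).copy()
    best_fit = float(objective(best))
    for name in stage_order:
        active = np.asarray(groups[name])  # only these indices may change now
        for _ in range(generations):
            children = np.repeat(best[None, :], offspring, axis=0)
            children[:, active] += sigma * rng.standard_normal((offspring, active.size))
            fits = np.array([objective(c) for c in children])
            i = int(np.argmax(fits))
            if fits[i] > best_fit:  # keep the parent unless a child beats it
                best, best_fit = children[i].copy(), float(fits[i])
    return best, best_fit


# Toy objective standing in for a whole-brain fitness (hypothetical)
target = np.linspace(-1.0, 1.0, 6)


def objective(x):
    return -float(np.sum((x - target) ** 2))


groups = {"global": [0, 1], "transmodal": [2, 3], "sensorimotor": [4, 5]}
x_best, f_best = staged_es(objective, np.zeros(6), groups,
                           ["global", "transmodal", "sensorimotor"])
print(np.round(x_best, 2), round(f_best, 4))
```

Freezing is enforced simply by restricting mutation to the active indices, which keeps each stage a lower-dimensional search anchored on what earlier stages found.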

This approach turns optimization into a curriculum. The model first learns a stable foundation, then builds on it with increasing specificity. Rather than searching everywhere at once, the model is guided toward stable regions first, and only then allowed to become more complex.

The key idea is not to reduce the problem, but to guide how it is solved.

Figure 1: Visualization of the brain’s large-scale organization used to structure optimization. Brain regions are grouped into functional networks and ordered along a hierarchy from sensory systems to higher-level cognitive regions. This hierarchy defines the sequence in which parameters are introduced during training.

What Happens When Structure Guides Optimization

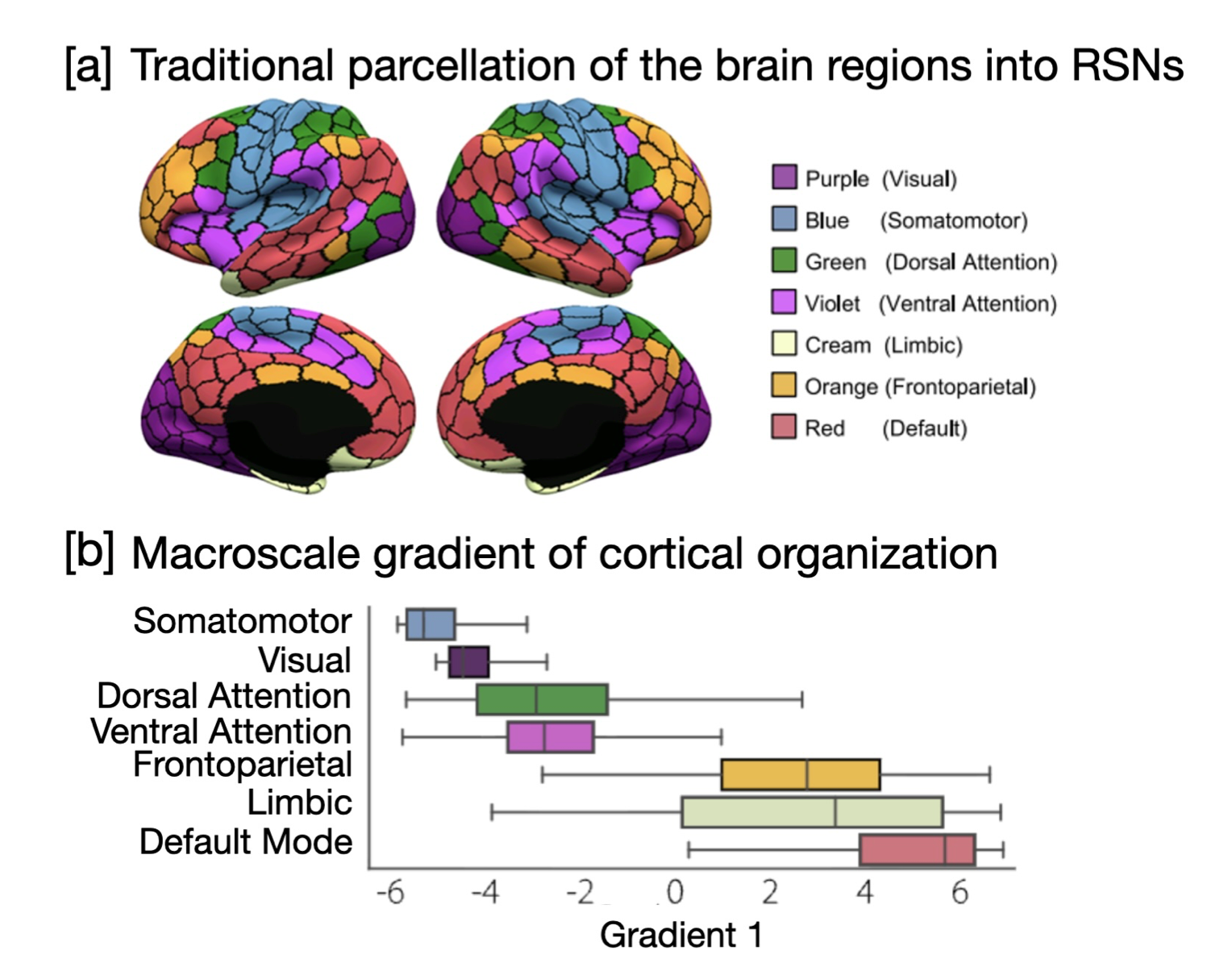

To evaluate this idea, we compared HICO against standard approaches, including a homogeneous model, a flat heterogeneous model, and curriculum variants with reversed or randomized ordering.
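The curriculum variants differ only in the order in which parameter groups are unlocked. A small sketch of how such orders might be generated is shown below; the stage labels follow the article's description, while the decision to keep the global stage first in every variant and to permute only the regional stages is an assumption, since the article does not say.

```python
import random

# Hypothetical stage labels following the article's description:
# global parameters first, then higher-order (transmodal) regions,
# then attention networks, then sensory and motor systems.
GLOBAL = "global"
REGIONAL = ["transmodal", "attention", "sensorimotor"]


def stage_orders(seed=0):
    """Stage orders for the hierarchical curriculum and its two controls.

    Assumption: every variant unlocks the global stage first and only
    permutes the regional stages.
    """
    rng = random.Random(seed)
    shuffled = REGIONAL.copy()
    rng.shuffle(shuffled)
    return {
        "hico": [GLOBAL] + REGIONAL,
        "reversed": [GLOBAL] + REGIONAL[::-1],
        "random": [GLOBAL] + shuffled,
    }


for variant, order in stage_orders().items():
    print(f"{variant:>8}: {' -> '.join(order)}")
```

The homogeneous and flat heterogeneous baselines have no stages at all: the former uses one shared parameter set for every region, the latter optimizes all regional parameters together from the start. Note that a single random shuffle can coincide with the hierarchical order, so a randomized control would presumably be run over several seeds.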

Several key findings emerge:

1. Better fit does not mean better models

Allowing different brain regions to have their own parameters improves how well models fit individual subjects. This is expected, as more flexibility allows the model to better match observed data.

However, this improvement is limited to the individual level. These models do not necessarily capture patterns that generalize beyond a single subject. In other words, they can fit the data without learning anything stable about the system itself.

Figure 2: Comparison of model fit across approaches for individual subjects. More flexible models achieve higher accuracy, but this does not reflect how well they generalize.

2. Standard approaches fail to generalize

When these models are evaluated across subjects, the limitations become clear. Homogeneous and flat optimization approaches often collapse when parameters are averaged, producing unstable or unusable solutions.

This reveals a critical failure mode. A model can appear successful during training but fail completely when applied more broadly.

HICO avoids this by guiding the search toward stable regions of the parameter space. As a result, its solutions remain valid even when applied across different individuals.

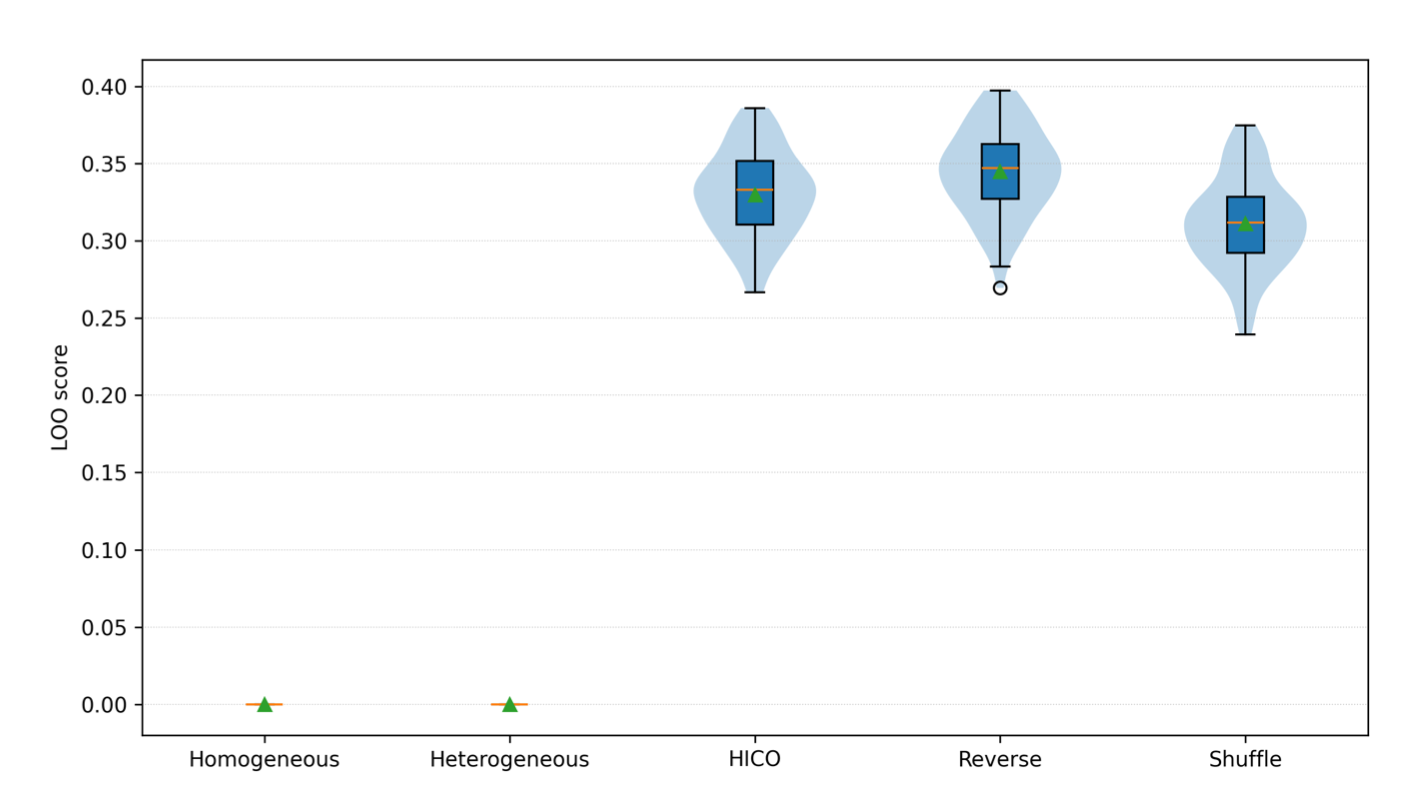

Figure 3: Leave-one-out (LOO) fitness distributions across optimization strategies. Cross-subject evaluation showing that standard methods collapse to unstable solutions, while curriculum-based approaches, especially HICO, remain robust and reliable.
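One plausible reading of the leave-one-out protocol, stated here as an assumption: fit each subject individually, average the parameters of all other subjects, and evaluate that averaged parameter set on the held-out subject. In the sketch below, `evaluate` is a hypothetical placeholder for running the brain model and scoring it against that subject's data.

```python
import numpy as np


def loo_fitness(params_per_subject, evaluate):
    """Leave-one-out check of a group-level parameter set.

    params_per_subject: array (n_subjects, n_params) of individually fitted values.
    evaluate: callable evaluate(params, subject) -> fitness on that subject
              (hypothetical; in practice this would run the brain model).
    """
    params = np.asarray(params_per_subject, dtype=float)
    scores = []
    for held_out in range(params.shape[0]):
        # Average everyone except the held-out subject...
        consensus = np.delete(params, held_out, axis=0).mean(axis=0)
        # ...and score that consensus on the subject it never saw
        scores.append(evaluate(consensus, held_out))
    return np.array(scores)


# Toy check: each synthetic subject has its own optimum
rng = np.random.default_rng(1)
optima = rng.normal(0.0, 0.1, size=(5, 3))


def evaluate(params, subject):
    return -float(np.sum((params - optima[subject]) ** 2))


print(np.round(loo_fitness(optima, evaluate), 4))
```

A fragile, subject-specific solution shows up here as a held-out score far below the individually fitted score, which is the collapse the figure describes.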

3. The order of optimization is what makes the difference

Not all structured approaches perform equally well. Randomizing the order of parameter updates or reversing the hierarchy reduces performance.

This shows that the benefit does not come from staging alone. It comes from aligning the optimization process with the actual structure of the brain. The ordering acts as a meaningful constraint that shapes the search in a productive way.

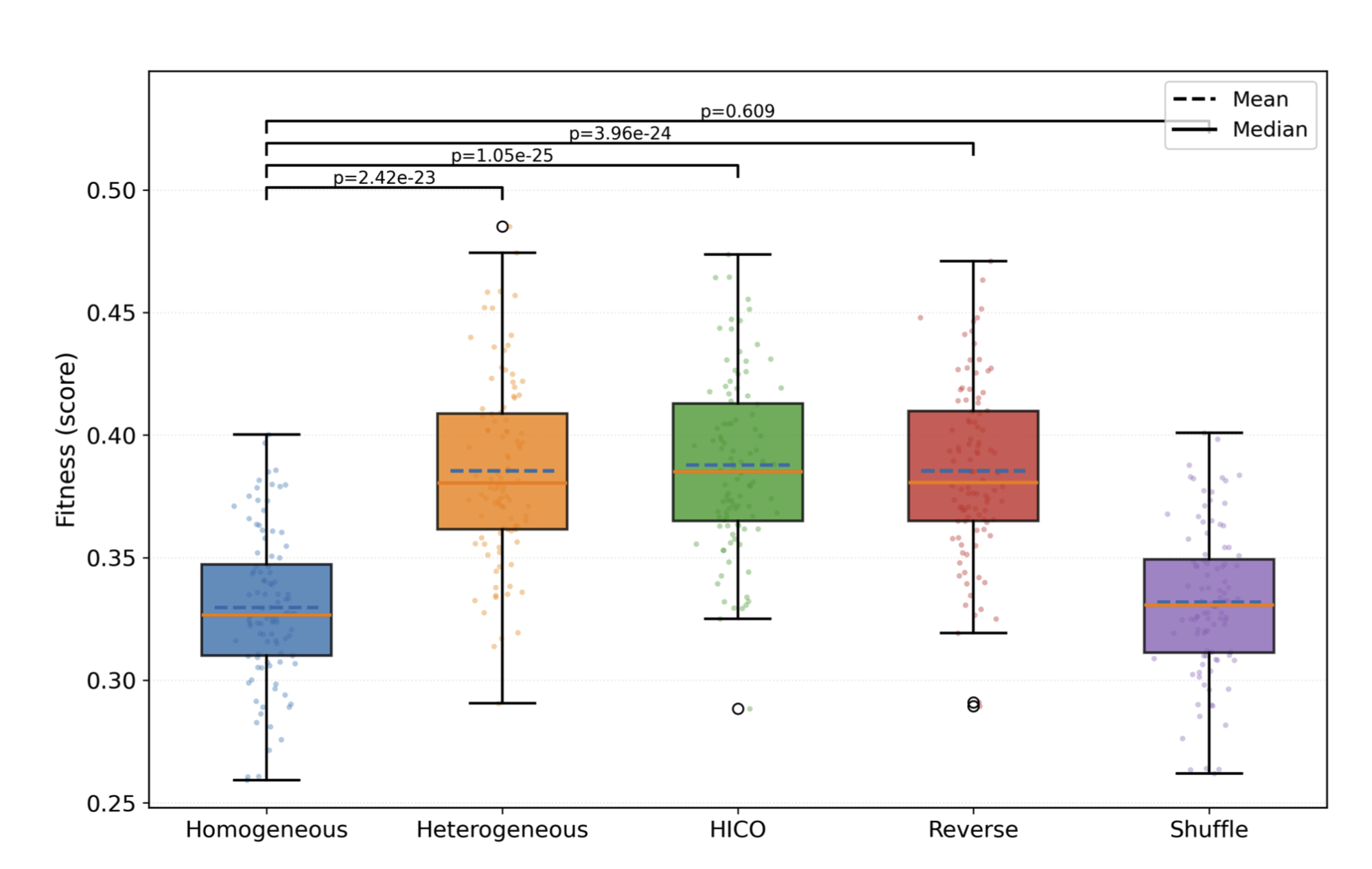

4. Only HICO produces behaviorally meaningful models

This is the most important result.

Even when standard methods fit brain data well, they fail to produce meaningful predictions about behavior. The learned parameters do not capture the underlying factors that drive cognitive or behavioral differences.

HICO changes this. By structuring the optimization process, it produces models whose parameters can reliably predict outcomes such as reasoning ability and behavioral traits. This indicates that the model is capturing something real about how brain dynamics relate to behavior.
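The article does not name the predictive model linking fitted parameters to behavior. As an assumed, commonly used setup, the sketch below predicts a behavioral score from each subject's parameters with ridge regression under leave-one-out cross-validation and reports the correlation between predicted and observed scores. The data are synthetic and the helper names are hypothetical.

```python
import numpy as np


def ridge_fit(X, y, alpha):
    """Closed-form ridge regression with an unpenalized intercept."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w


def loo_prediction_r(X, y, alpha=1.0):
    """Predict each subject's score from all others, then correlate with truth."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    preds = np.empty_like(y)
    for i in range(len(y)):
        train = np.arange(len(y)) != i  # boolean mask excluding subject i
        w, b = ridge_fit(X[train], y[train], alpha)
        preds[i] = X[i] @ w + b
    return float(np.corrcoef(preds, y)[0, 1])


# Synthetic data: 40 "subjects" x 6 model parameters (not real results)
rng = np.random.default_rng(3)
X = rng.standard_normal((40, 6))
y = X @ np.array([1.0, -0.5, 0.0, 0.0, 0.8, 0.0]) + 0.3 * rng.standard_normal(40)
print(f"LOO prediction r = {loo_prediction_r(X, y):.2f}")
```

Holding each subject out of its own prediction matters: an in-sample fit would report a high correlation even for parameters that carry no transferable information about behavior.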

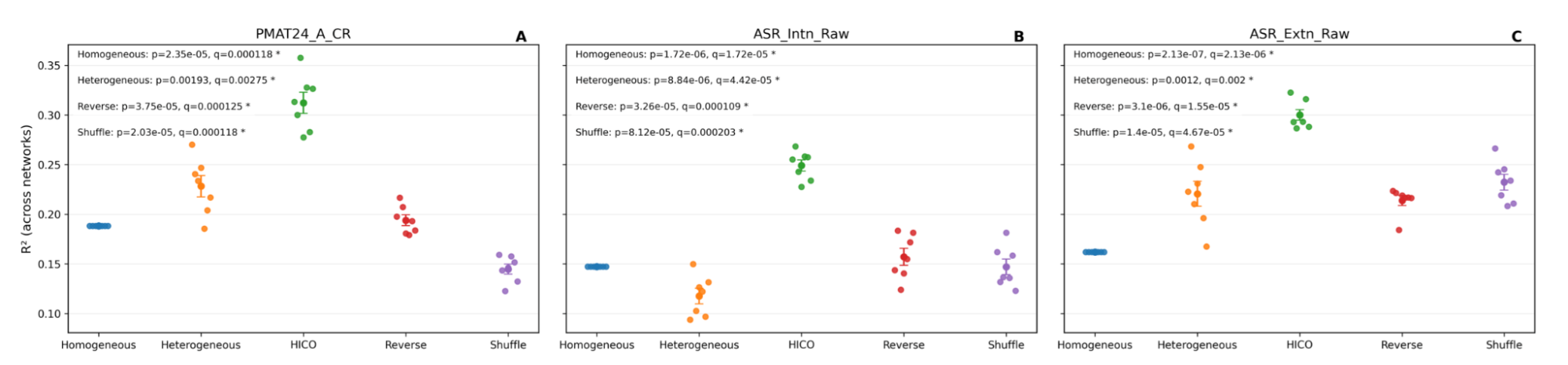

Figure 4: Predicting behavior based on solutions obtained by different optimization strategies. Behavior prediction results showing that only HICO produces models whose parameters consistently relate to cognitive and behavioral outcomes.

5. The search itself becomes more robust

The difference is not just in outcomes, but in how the search unfolds.

Flat optimization methods converge to narrow regions of the parameter space, indicating fragile solutions that are sensitive to small changes. HICO, in contrast, explores a broader and more stable set of solutions.

This broader exploration is what enables generalization. Instead of relying on a single narrow solution, the model identifies regions of the search space that remain valid under variation.
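The article does not specify how breadth of exploration was measured. One simple proxy, assumed here, is the mean pairwise Euclidean distance among a set of solutions, such as a final population or the best solutions from repeated runs. Distances depend on scale, so parameters are assumed to be normalized to comparable ranges first.

```python
import numpy as np


def mean_pairwise_distance(solutions):
    """Average Euclidean distance over all pairs of solution vectors."""
    S = np.asarray(solutions, dtype=float)
    diffs = S[:, None, :] - S[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=-1))
    iu = np.triu_indices(len(S), k=1)  # each unordered pair once
    return float(dists[iu].mean())


# Synthetic populations (not results from the paper)
rng = np.random.default_rng(4)
narrow = 0.5 + 0.01 * rng.standard_normal((30, 5))
broad = 0.5 + 0.10 * rng.standard_normal((30, 5))
print(mean_pairwise_distance(narrow), mean_pairwise_distance(broad))
```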

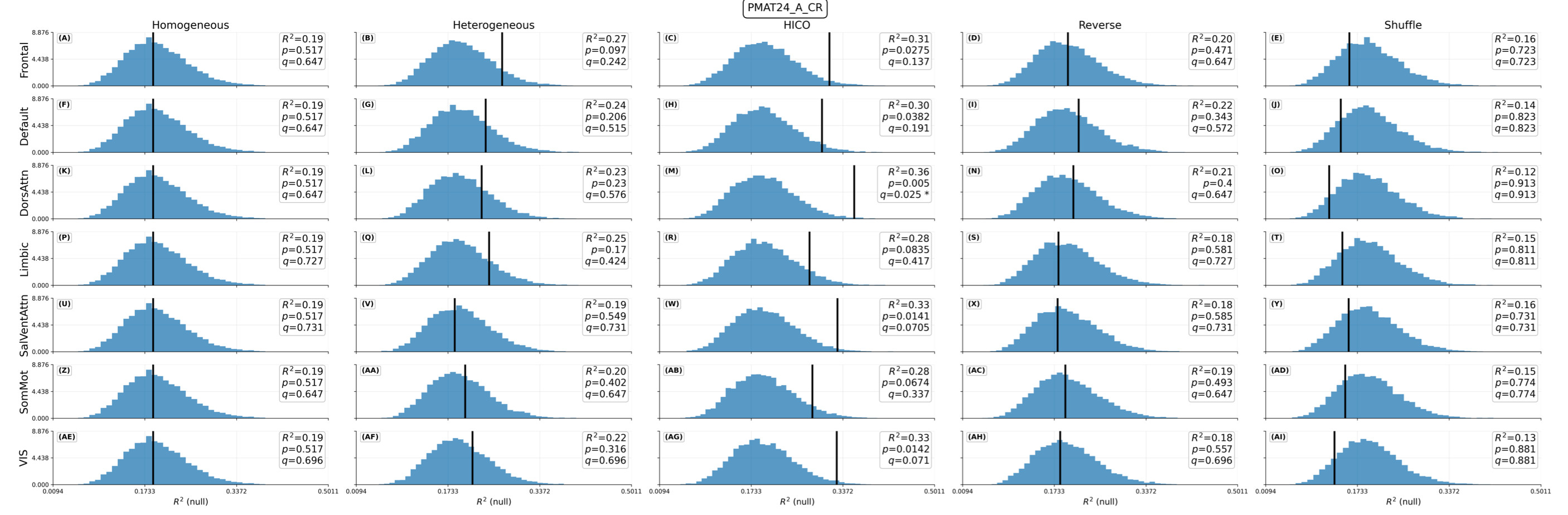

Figure 5: Statistical validation of parameter–behavior relationships across brain networks. HICO consistently outperforms permutation-based baselines, demonstrating that its predictions reflect real and reliable structure rather than chance.
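The caption does not state which statistic was permuted. A common design, assumed here, shuffles behavioral scores across subjects, recomputes the parameter–behavior score for each shuffle, and reports how often the shuffled score matches or beats the real one. The sketch uses a plain Pearson correlation as the score; a cross-validated prediction score could be passed as `score_fn` instead.

```python
import numpy as np


def permutation_p_value(score_fn, x, y, n_perm=1000, seed=0):
    """One-sided permutation test: how often does shuffled behavior score as well?"""
    rng = np.random.default_rng(seed)
    observed = score_fn(x, y)
    null = np.array([score_fn(x, rng.permutation(y)) for _ in range(n_perm)])
    # Add-one correction keeps the estimate strictly above zero
    p = (1 + np.sum(null >= observed)) / (n_perm + 1)
    return observed, float(p)


def pearson_r(x, y):
    return float(np.corrcoef(x, y)[0, 1])


# Synthetic parameter-behavior pair (not data from the study)
rng = np.random.default_rng(5)
param = rng.standard_normal(50)
behavior = 0.6 * param + 0.5 * rng.standard_normal(50)
r, p = permutation_p_value(pearson_r, param, behavior, n_perm=500)
print(f"r = {r:.2f}, permutation p = {p:.4f}")
```

Shuffling breaks any link between a subject's parameters and their behavior while keeping both distributions intact, so the null distribution reflects what chance alone would produce.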

Why This Matters

These findings extend well beyond this specific application.

In neuroscience, improving the generalizability of whole-brain models is a major step forward. It enables a deeper understanding of how brain dynamics relate to cognition and behavior, and allows models to move from fitting individual datasets to capturing patterns that hold across populations.

In healthcare, this could lead to more reliable predictive models. Whole-brain modeling could support earlier detection of neurological and psychiatric conditions from fMRI, better prediction of treatment outcomes, and more precise tracking of how interventions affect brain function over time. If these models truly generalize, they can move beyond fitting brain data toward real-world use, including more personalized, data-driven treatment decisions.

More broadly, this work highlights a shift in how we approach optimization in AI.

Many real-world systems share the same challenges as brain modeling. They are high-dimensional, nonlinear, and difficult to optimize. In these settings, unconstrained search often produces solutions that appear correct but fail in practice.

What this work demonstrates is that incorporating domain knowledge into the optimization process can fundamentally change that outcome. By structuring how the search unfolds, we can guide algorithms toward solutions that are more stable, more generalizable, and more meaningful.

Looking Forward

This approach can be extended in several important directions. Scaling to larger datasets would enable more precise modeling of individual variability, while applying it to clinical populations could provide new insights into neurological and psychiatric conditions and into treatment response. Combining HICO with scalable evolutionary frameworks such as BLADE also creates a path toward even higher-dimensional systems, where traditional optimization methods continue to struggle.

More broadly, this work suggests a shift in how complex systems should be approached. Rather than relying on increasingly large or computationally intensive search processes, there is clear value in guiding optimization with structure that reflects the system itself.

At its core, the takeaway is simple. Optimization is not just about searching harder. It is about searching with structure and purpose.

Hormoz specializes in evolutionary AI and explainable decision-making systems, focusing on scalable optimization techniques and multi-agent problem-solving frameworks.