April 7, 2026

Smarter Chlorination: Using Evolutionary AI to Control Complex Water Systems

New research shows how evolutionary methods can optimize chlorination control in water distribution systems, balancing safety, cost, and stability.

Key Takeaways

Maintaining safe chlorine levels in water distribution systems is a complex, multi-objective control problem shaped by nonlinear dynamics, delayed effects, and changing demand.

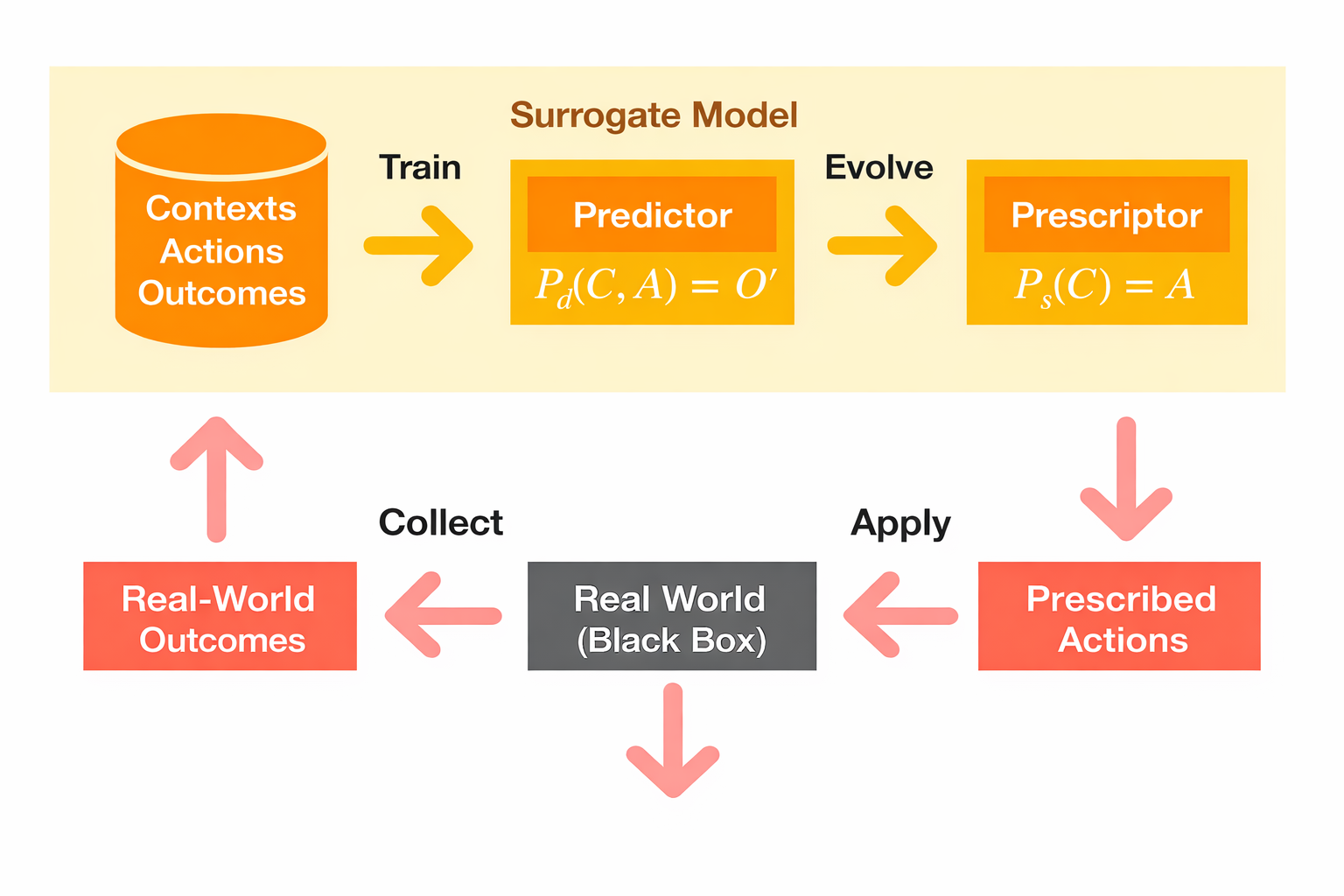

The paper introduces Evolutionary Surrogate-Assisted Prescription (ESP), a surrogate-assisted evolutionary framework that combines a learned system model, neuroevolution (NEAT), and multi-objective optimization (NSGA-II) in an iterative loop.

A neural network surrogate model is used to approximate the behavior of a computationally expensive simulator, enabling efficient evaluation of control strategies.

Controllers are evolved to optimize multiple competing objectives simultaneously, including safety bounds, spatial consistency, cost, and smoothness of chlorine injection.

The approach produces stronger tradeoffs across objectives than baseline strategies and reinforcement learning (PPO) in this setting.

The framework provides a practical way to optimize complex, high-stakes systems where real-world experimentation is not possible and simulation is computationally expensive.

Access to clean drinking water is one of the most fundamental requirements of modern society, and delivering it is one of the most difficult problems to get right at scale.

Water distribution networks must deliver safe water to millions of people every day, relying on chlorine as an effective and low-cost disinfectant. However, chlorine must be carefully controlled within a narrow range: too little allows harmful microorganisms to persist, while too much leads to chemical byproducts that have been linked to serious health risks, including cancers and cardiovascular disease.

Maintaining that balance across a large, dynamic network is far from straightforward.

Chlorine levels cannot be set once and left alone. As water moves through the network, changing demand and flow conditions continuously alter how chlorine spreads, decays, and reacts with organic matter. This means the concentration at any given point depends not just on current conditions, but on decisions made earlier and elsewhere in the system.

In practice, this turns chlorination into a continuous, system-wide control problem under uncertainty, where small missteps can have delayed and far-reaching consequences.

Despite decades of research, this problem remains difficult to solve. Traditional control methods rely on simplified assumptions that struggle to capture these dynamics, while reinforcement learning approaches often face instability, noisy objectives, and challenges in balancing competing goals.

In new research from Cognizant AI Lab, we explore a different approach. By combining evolutionary optimization with learned system models, this work introduces a scalable way to design chlorination control strategies that can adapt to complex, real-world conditions. More broadly, it points to a new pathway for improving critical infrastructure systems and supporting the safe, reliable delivery of drinking water at scale.

The Bottleneck: Learning Without Real-World Experimentation

At the heart of this problem is a practical constraint. You cannot experiment directly on a live water system.

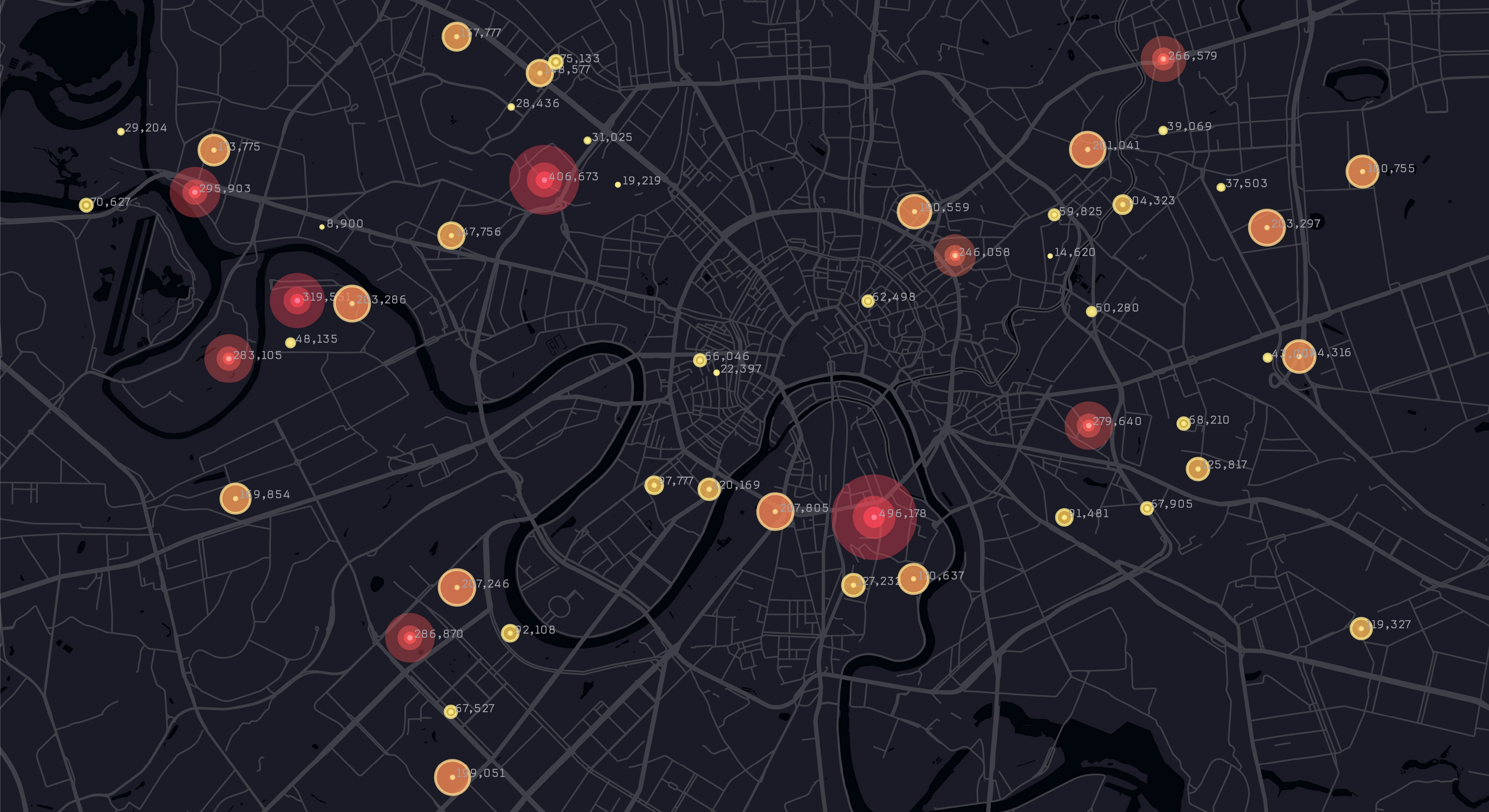

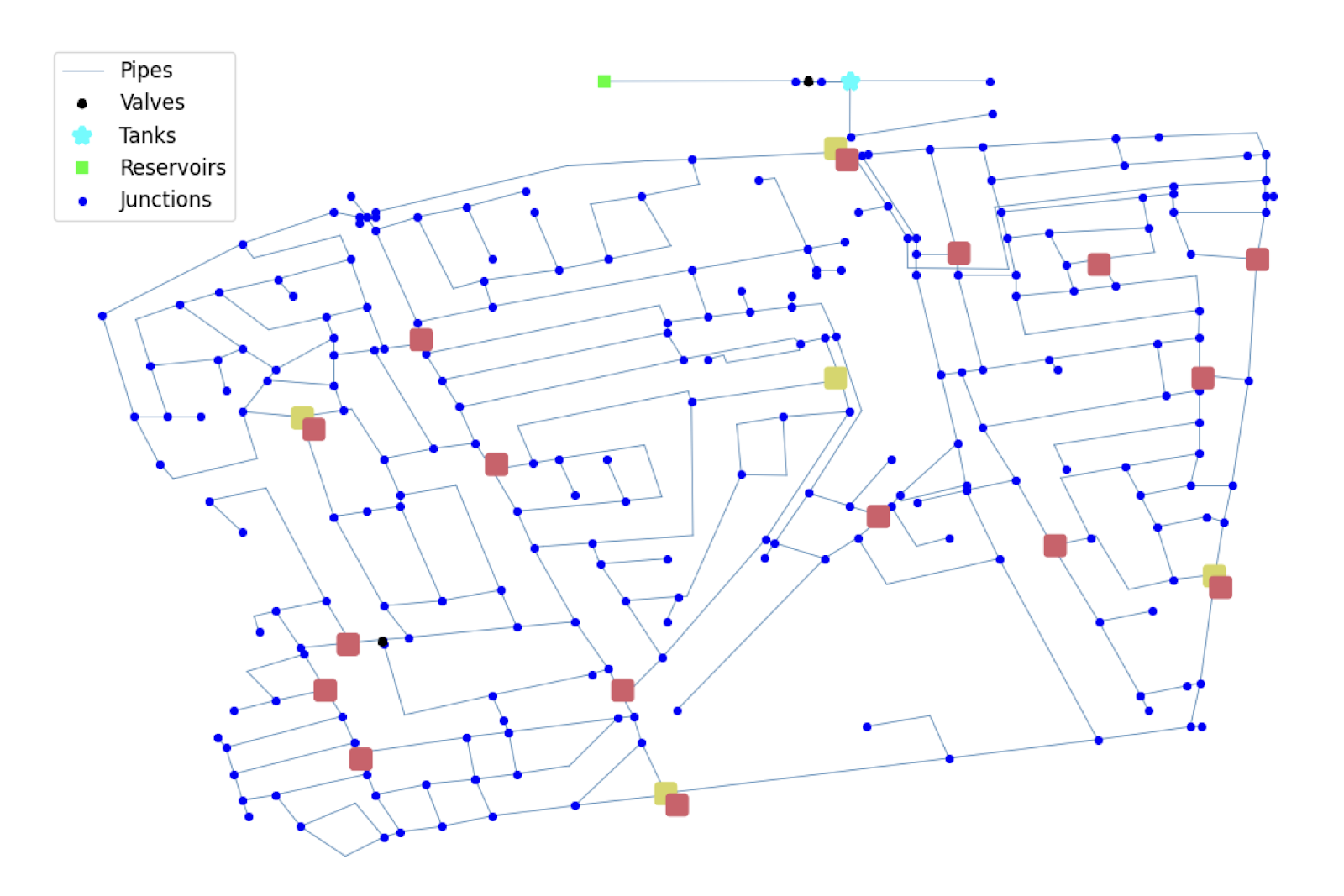

Water distribution networks are critical infrastructure. Testing new control strategies in the real world is not feasible, which means optimization must happen in simulation. Tools like EPANET model the physics and chemistry of water distribution systems with high fidelity, capturing how water flows, how chlorine propagates, and how it reacts over time.

But that accuracy comes at a cost.

These simulations are computationally expensive, especially when used repeatedly to evaluate different control strategies. Learning an effective policy requires exploring many possible decisions across long time horizons, and each evaluation adds significant overhead.

This creates a fundamental bottleneck. The system we need to learn from is too slow to support large-scale learning.

The Approach: A Learning Loop Built on Evolution and Simulation

To address this, the paper introduces a unified framework built as an iterative learning loop, combining a learned model of the system with evolutionary optimization.

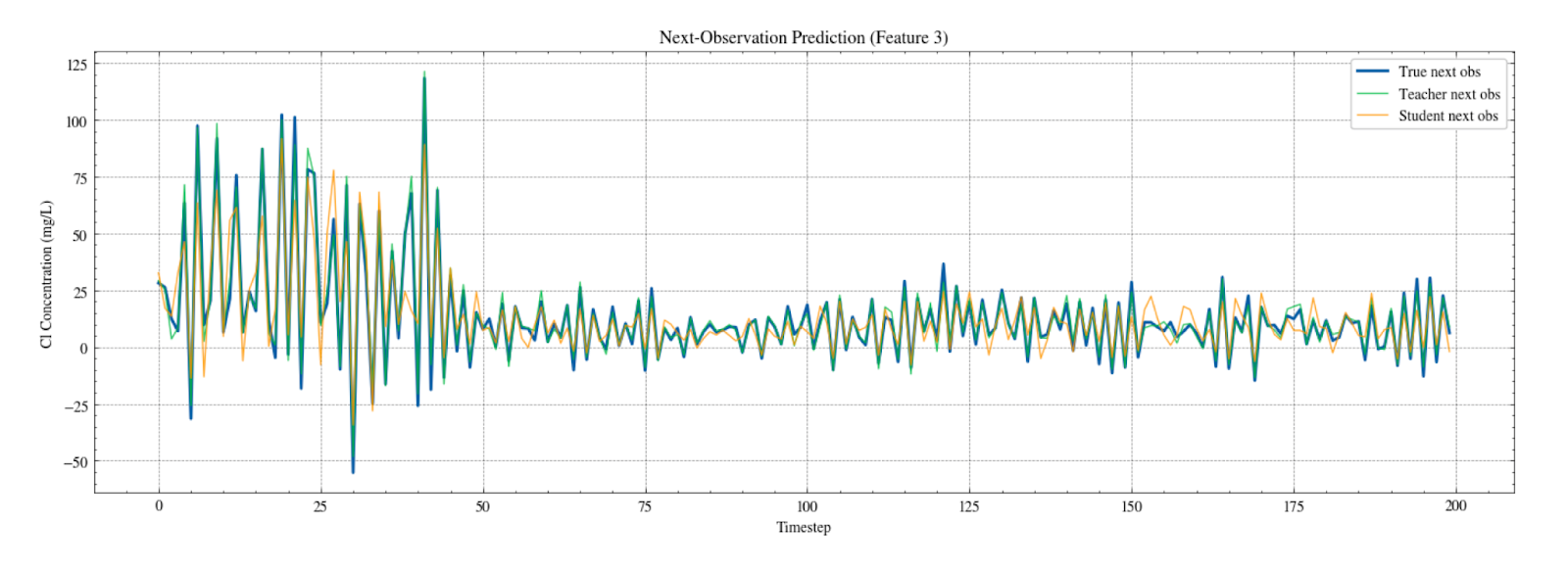

At the core of the approach is a surrogate model that approximates the behavior of the water distribution system. Instead of relying on the full simulator for every evaluation, a neural network is trained to predict how flows and chlorine concentrations evolve over time. This allows candidate strategies to be evaluated efficiently, without sacrificing the ability to model complex system dynamics.
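To make the surrogate idea concrete, here is a minimal sketch under loud assumptions: `slow_simulator` is a hypothetical one-line stand-in for an expensive simulator step, and a two-parameter linear model stands in for the neural network described in the paper. None of these names or constants come from the paper. The point is only the pattern: collect transitions from the slow system, fit a cheap model, then roll the cheap model forward.

```python
import random

def slow_simulator(c, u):
    """Hypothetical stand-in for one expensive simulator step.
    Chlorine c decays each step and injection u adds to it."""
    return 0.9 * c + 0.5 * u

def collect(n, seed=0):
    """Sample (concentration, injection, next concentration) transitions."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        c, u = rng.uniform(0.0, 1.0), rng.uniform(0.0, 0.2)
        data.append((c, u, slow_simulator(c, u)))
    return data

def fit_surrogate(data):
    """Least-squares fit of next_c ~ a*c + b*u via the 2x2 normal equations."""
    scc = sum(c * c for c, u, y in data)
    scu = sum(c * u for c, u, y in data)
    suu = sum(u * u for c, u, y in data)
    scy = sum(c * y for c, u, y in data)
    suy = sum(u * y for c, u, y in data)
    det = scc * suu - scu * scu
    a = (scy * suu - suy * scu) / det
    b = (suy * scc - scy * scu) / det
    return a, b

def rollout(model, c0, injections):
    """Predict a concentration trace using only the surrogate."""
    a, b = model
    c, trace = c0, []
    for u in injections:
        c = a * c + b * u
        trace.append(c)
    return trace

model = fit_surrogate(collect(200))
print(rollout(model, 0.3, [0.1, 0.1, 0.0]))
```

Once fitted, `rollout` never touches the slow system, which is what makes evaluating many candidate strategies affordable.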

Alongside this model, neural network controllers are evolved to determine how chlorine should be injected across the network. These controllers take as input observations such as flow and concentration measurements, and output control decisions at each timestep. Rather than being trained through gradients, they are improved through an evolutionary process that explores a diverse set of possible strategies.
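The sketch below illustrates gradient-free controller improvement in a deliberately simplified form. It is not NEAT: the network topology is fixed and only the weights are mutated, whereas NEAT also evolves structure. The toy dynamics, the `TARGET` concentration, and every hyperparameter are assumptions chosen for illustration, and the single error score stands in for the multiple objectives used in the paper.

```python
import math
import random

N_IN, N_HID = 2, 3                       # inputs: (flow, concentration)
N_PARAMS = N_IN * N_HID + N_HID + N_HID + 1
TARGET = 0.3                             # hypothetical target concentration

def controller(params, obs):
    """Tiny fixed-topology network: observation -> injection rate in [0, 1]."""
    w1 = params[:N_IN * N_HID]
    b1 = params[N_IN * N_HID:N_IN * N_HID + N_HID]
    w2 = params[N_IN * N_HID + N_HID:N_IN * N_HID + 2 * N_HID]
    b2 = params[-1]
    hidden = [
        math.tanh(sum(w1[h * N_IN + i] * obs[i] for i in range(N_IN)) + b1[h])
        for h in range(N_HID)
    ]
    z = sum(w2[h] * hidden[h] for h in range(N_HID)) + b2
    z = max(-60.0, min(60.0, z))         # guard against overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def episode_error(params, steps=30):
    """Mean distance from TARGET over a toy episode (lower is better)."""
    c, err = 0.0, 0.0
    for t in range(steps):
        flow = 0.5 + 0.5 * math.sin(t / 5.0)   # toy time-varying demand
        u = controller(params, (flow, c))
        c = 0.9 * c + 0.1 * u                  # toy decay-plus-injection dynamics
        err += abs(c - TARGET)
    return err / steps

def evolve(generations=30, pop_size=20, sigma=0.3, seed=0):
    """Elitist weight-mutation search; no gradients are computed anywhere."""
    rng = random.Random(seed)
    pop = [[rng.gauss(0, 1) for _ in range(N_PARAMS)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=episode_error)
        history.append(episode_error(pop[0]))
        parents = pop[:pop_size // 4]          # keep the best quarter unchanged
        children = [
            [w + rng.gauss(0, sigma) for w in rng.choice(parents)]
            for _ in range(pop_size - len(parents))
        ]
        pop = parents + children
    return min(pop, key=episode_error), history

best, history = evolve()
print(history[0], history[-1])
```

Because the best quarter of each generation is carried over unchanged, the best error in `history` can never get worse from one generation to the next.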

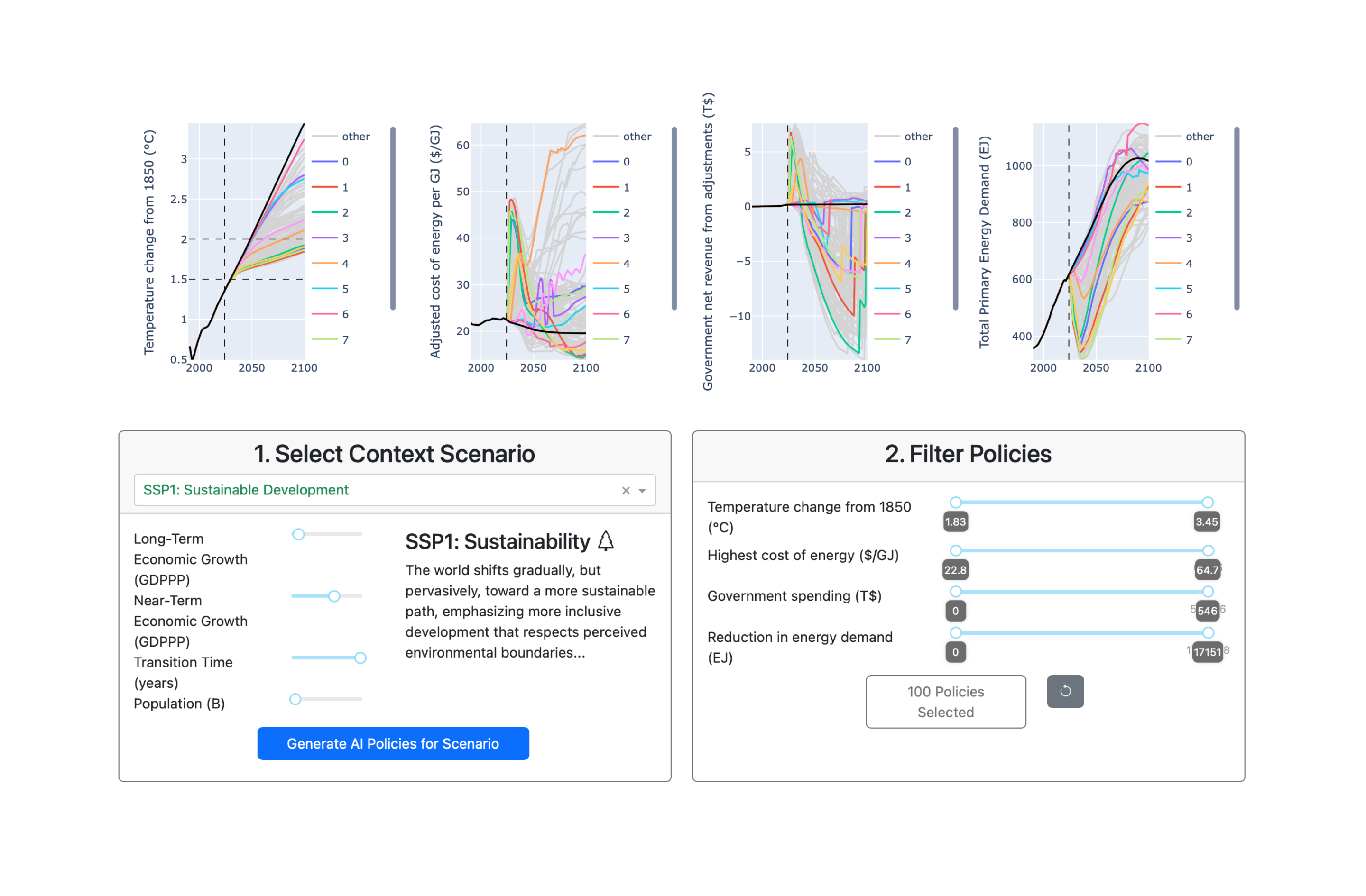

Crucially, these strategies are evaluated across multiple objectives simultaneously. The system must balance keeping chlorine within safe bounds, maintaining consistent concentrations, minimizing total chemical usage, and ensuring stable injection patterns over time. These objectives often conflict, and instead of collapsing them into a single reward, the framework uses multi-objective optimization to discover a range of viable tradeoffs.
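One way to picture the four objectives and the tradeoffs between them is the sketch below. The safe band `C_MIN` to `C_MAX` and the exact formula for each objective are assumptions made for illustration, not the definitions used in the paper. The `pareto_front` helper shows only the dominance test at the heart of multi-objective selection; NSGA-II adds non-dominated ranking and crowding distance on top of it.

```python
from statistics import pstdev

# Hypothetical safe band in mg/L; the paper's actual bounds are not stated here.
C_MIN, C_MAX = 0.2, 0.4

def evaluate(concentrations, injections):
    """Score one rollout on four objectives, all to be minimized.

    concentrations: list of timesteps, each a list of per-node chlorine levels.
    injections: list of injection amounts, one per timestep.
    """
    readings = [c for step in concentrations for c in step]
    # Safety: fraction of readings outside the safe band.
    bound_violation = sum(c < C_MIN or c > C_MAX for c in readings) / len(readings)
    # Spatial consistency: average spread of concentration across nodes.
    spatial_spread = sum(pstdev(step) for step in concentrations) / len(concentrations)
    # Cost: total chlorine injected.
    cost = sum(injections)
    # Smoothness: total change in injection between consecutive timesteps.
    roughness = sum(abs(b - a) for a, b in zip(injections, injections[1:]))
    return (bound_violation, spatial_spread, cost, roughness)

def dominates(a, b):
    """a dominates b if it is no worse on every objective and better on one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(scores):
    """Indices of score tuples that no other tuple dominates."""
    return [
        i for i, s in enumerate(scores)
        if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)
    ]

scores = [
    (0.0, 0.05, 10.0, 1.0),   # safest, but uses more chlorine
    (0.1, 0.05, 6.0, 1.0),    # cheaper, with some bound violations
    (0.1, 0.06, 12.0, 2.0),   # worse than the first on every objective
]
print(pareto_front(scores))   # [0, 1]
```

The first two candidates both survive because neither is better on every objective, which is the kind of tradeoff a single scalar reward would hide.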

These components are connected through an iterative loop. The surrogate model is used to rapidly evaluate candidate controllers. The best-performing strategies are then tested on the full simulator, generating new data that is used to refine the surrogate. Over time, this creates a feedback cycle where both the model and the control strategies improve together.
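The loop described above can be sketched in a few lines, again under heavy assumptions. Here a "controller" is reduced to a single constant dose `u`, `true_simulator` is a hypothetical toy cost function standing in for the expensive simulator, and an inverse-distance-weighted average over past evaluations stands in for the neural network surrogate. Only the shape of the loop is meant to carry over: search cheaply on the surrogate, verify the top candidates on the simulator, and feed the results back into the surrogate.

```python
import random

def true_simulator(u):
    """Hypothetical expensive evaluation: cost of holding a constant dose u."""
    conc = 0.8 * u / (0.5 + u)            # toy saturating response to dosing
    return abs(conc - 0.3) + 0.05 * u     # distance from target plus chemical cost

def surrogate_predict(data, u, eps=1e-6):
    """Cheap prediction: inverse-distance-weighted average of known costs."""
    weights = [1.0 / (abs(x - u) + eps) ** 2 for x, _ in data]
    return sum(w * y for w, (_, y) in zip(weights, data)) / sum(weights)

def esp_loop(rounds=5, candidates_per_round=50, top_k=3, seed=0):
    rng = random.Random(seed)
    # Seed the surrogate with a few real simulator evaluations.
    data = [(u, true_simulator(u)) for u in (0.0, 0.5, 1.0)]
    best_u, best_cost = min(data, key=lambda d: d[1])
    for _ in range(rounds):
        # 1. Score many candidates on the surrogate only.
        candidates = [rng.uniform(0.0, 1.0) for _ in range(candidates_per_round)]
        candidates.sort(key=lambda u: surrogate_predict(data, u))
        # 2. Verify only the most promising ones on the real simulator.
        for u in candidates[:top_k]:
            cost = true_simulator(u)
            # 3. New simulator data refines the surrogate for the next round.
            data.append((u, cost))
            if cost < best_cost:
                best_u, best_cost = u, cost
    return best_u, best_cost, len(data)

best_u, best_cost, n_sim_calls = esp_loop()
print(best_u, best_cost, n_sim_calls)
```

With these default settings the loop scores 250 candidates on the surrogate but calls the simulator only 18 times, which is the economy the approach relies on.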

Figure 1. Water distribution network topology: The water distribution network used in this study, illustrating the complexity of flows, injection points, and monitoring nodes across the system.

Figure 2. ESP optimization loop: The Evolutionary Surrogate-Assisted Prescription (ESP) loop, showing how the surrogate model and evolved controllers are co-optimized through repeated interaction with the simulator.

Key Findings: Stability, Tradeoffs, and Real-World Performance

The results of this approach highlight several important differences from traditional methods.

First, reinforcement learning struggled to produce effective control strategies in this environment. The combination of delayed effects, noisy dynamics, and competing objectives made it difficult to define a stable learning signal. In practice, PPO often converged to trivial or ineffective behaviors, failing to meaningfully control chlorine injection.

In contrast, the evolutionary framework consistently identified solutions that balanced multiple objectives more effectively. Rather than optimizing a single reward, it explored a range of tradeoffs, allowing it to find strategies that maintained safe chlorine levels while reducing cost and improving stability.

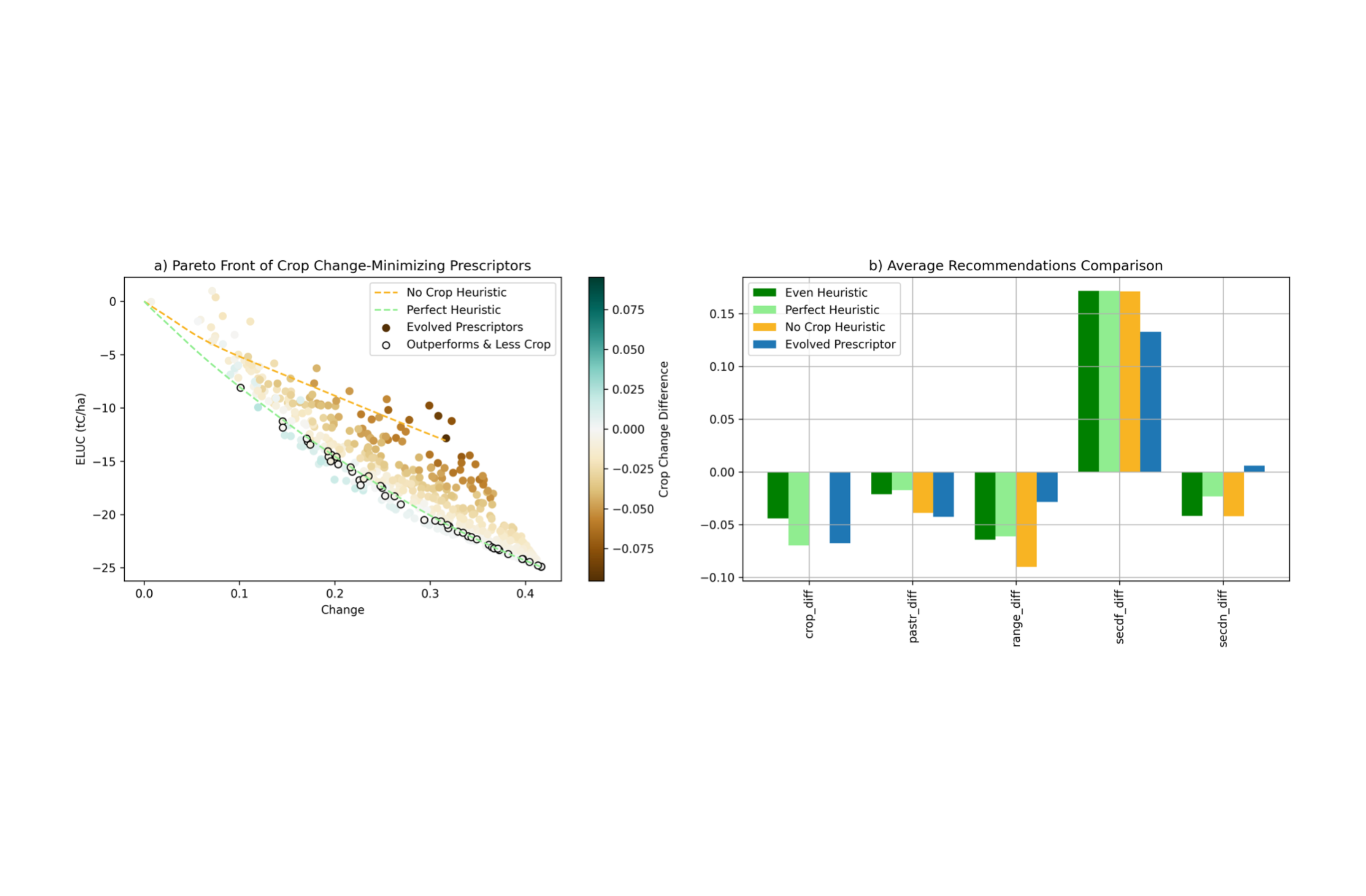

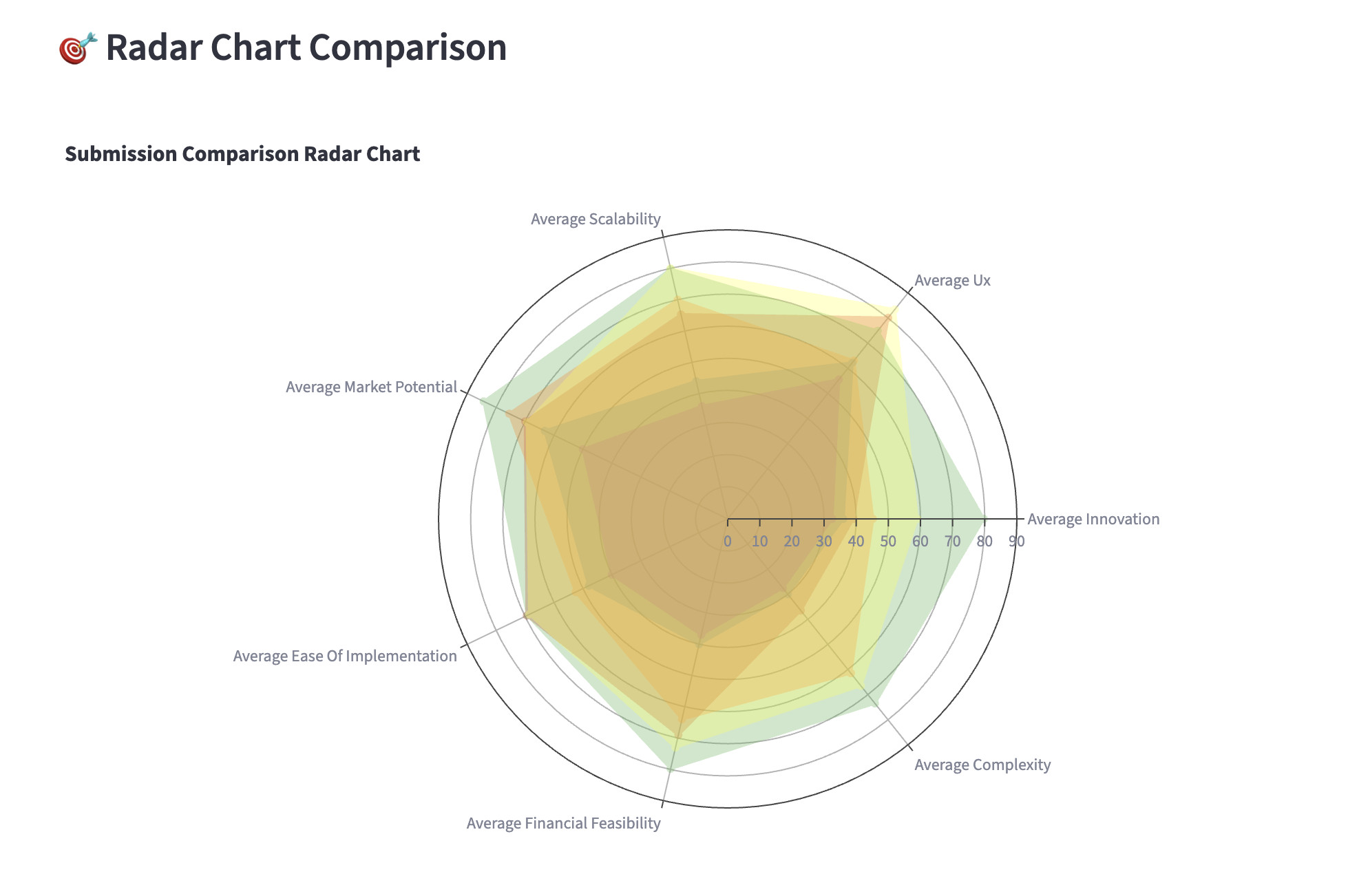

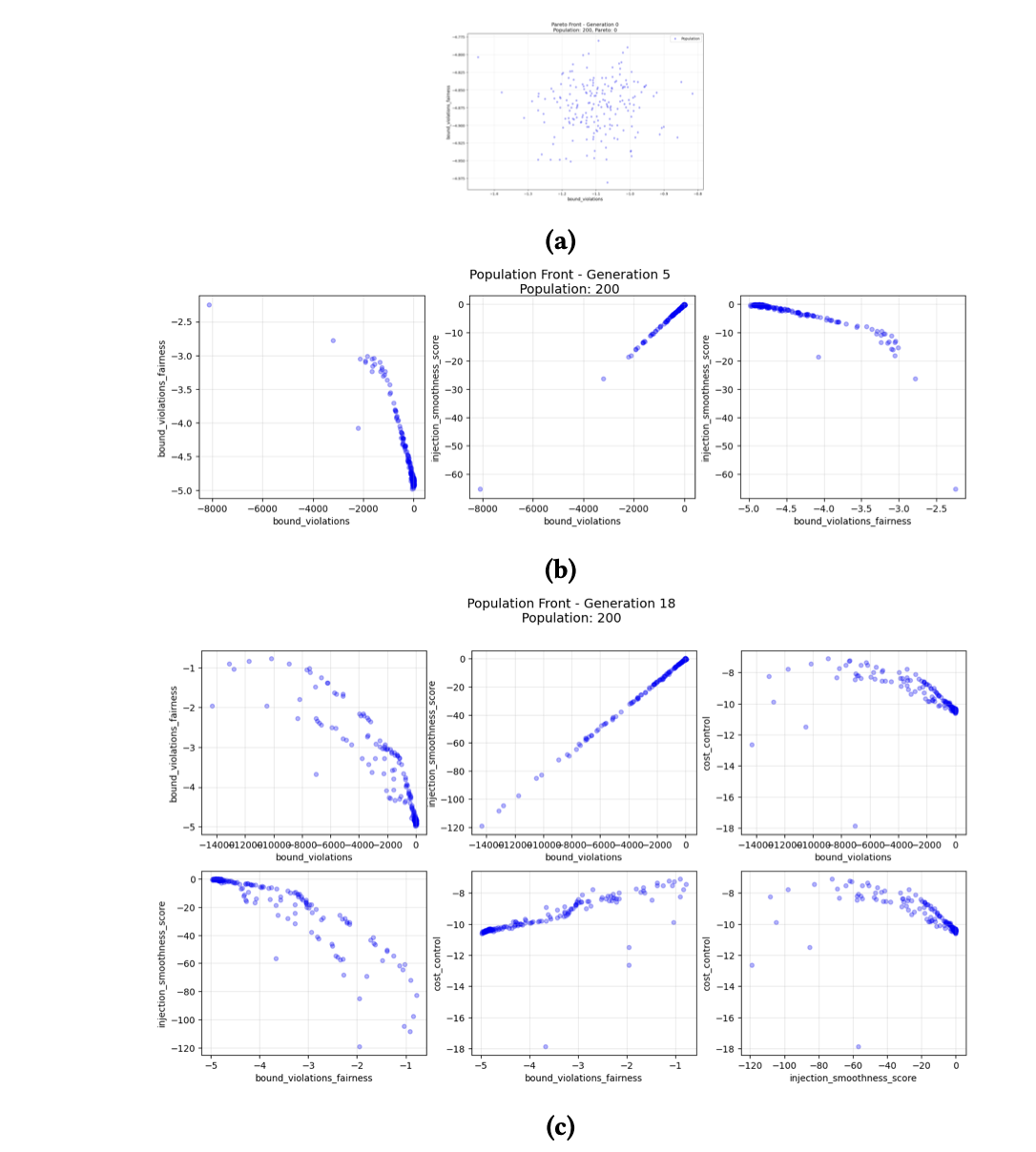

This behavior is best understood through the Pareto fronts produced during optimization.

Figure 3. Surrogate vs. true system behavior: Comparison of surrogate predictions with true simulator values, showing the model can accurately track system dynamics.

These fronts illustrate how the system gradually discovers a spectrum of viable strategies, rather than collapsing to a single solution. This is particularly important in real-world settings, where different operating conditions may require different tradeoffs.

Another key result is the impact of curriculum learning. When all objectives were introduced at once, the system often converged to suboptimal or unstable strategies. By introducing objectives gradually, the framework was able to guide the search more effectively, leading to significantly improved performance, including lower infection risk and more stable control policies.
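A curriculum over objectives can be sketched as a schedule that widens the set of objectives used for selection as generations pass. The objective names, their order, and the `gens_per_stage` value below are all assumptions for illustration; the paper's actual schedule is not described here.

```python
# Hypothetical objective names and ordering (all minimized).
OBJECTIVES = ["bound_violation", "spatial_spread", "cost", "roughness"]

def active_objectives(generation, gens_per_stage=10):
    """Start with one objective and add another every gens_per_stage generations."""
    n = min(len(OBJECTIVES), 1 + generation // gens_per_stage)
    return OBJECTIVES[:n]

def dominates(a, b, names):
    """Dominance restricted to the currently active objectives."""
    return (all(a[k] <= b[k] for k in names)
            and any(a[k] < b[k] for k in names))

def non_dominated(population, names):
    """Names of candidates that no other candidate dominates."""
    return [
        name for name, score in population.items()
        if not any(dominates(other, score, names)
                   for other_name, other in population.items()
                   if other_name != name)
    ]

population = {
    "A": {"bound_violation": 0.0, "spatial_spread": 0.2, "cost": 10, "roughness": 3},
    "B": {"bound_violation": 0.1, "spatial_spread": 0.1, "cost": 8, "roughness": 2},
    "C": {"bound_violation": 0.2, "spatial_spread": 0.3, "cost": 5, "roughness": 4},
}

for generation in (0, 10, 20):
    names = active_objectives(generation)
    print(generation, names, non_dominated(population, names))
```

In this toy population the non-dominated set grows from `["A"]` to `["A", "B"]` to `["A", "B", "C"]` as objectives are added, so early selection concentrates on safety before cost and smoothness begin to compete with it.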

Finally, the continuous refinement of the surrogate model played a critical role in enabling exploration. As new strategies were evaluated on the simulator, the surrogate became more accurate in previously unexplored regions of the system. This allowed the evolutionary process to discover better solutions over time, rather than plateauing early.

Figure 4. Pareto front evolution: Population fronts as objectives are added, illustrating how the system learns tradeoffs across competing goals.

Together, these results indicate that, in this setting, combining surrogate modeling with evolutionary search provides a more stable and effective approach to this class of control problems than the reinforcement learning baseline (PPO).

Why This Matters

This work addresses a class of problems that are both technically challenging and critically important.

Water distribution systems are a foundational part of modern infrastructure, yet they are extremely difficult to optimize. They operate under uncertainty, evolve over time, and require balancing multiple competing objectives that directly impact public health. At the same time, they cannot be safely experimented on in the real world, and the simulations needed to model them are too computationally expensive to support large-scale learning.

These constraints are not unique to water systems. They appear across many real-world domains, from energy grids to transportation networks, where decisions must be made continuously, tradeoffs are unavoidable, and mistakes carry real consequences.

What this research demonstrates is that these challenges can be addressed with a different class of AI systems.

By combining surrogate modeling with evolutionary optimization in a closed learning loop, the framework avoids two major limitations of traditional approaches. It removes the dependence on slow, high-cost simulation for every decision, and it avoids the instability and reward design challenges that often limit reinforcement learning in complex environments.

In the context of water systems, this means chlorination strategies can be designed to better balance safety, cost, and operational stability, which could improve the reliability of systems that millions of people depend on every day.

More broadly, this work shows how AI can move beyond controlled benchmarks and into real-world infrastructure systems, where environments are noisy, objectives are competing, and direct experimentation is not possible. It provides a practical pathway for applying AI to high-stakes systems where both performance and reliability are essential.
