March 26, 2026

Continuous and Trigger-Based AI Agents: Design Patterns for Multi-Agent Systems

Exploring how continuously running and trigger-activated agents work together to power efficient, distributed AI systems.

Key Takeaways

- AI agents can operate beyond reactive patterns, either by running continuously or activating based on specific conditions (triggers).

- Continuous agents enable autonomous, local decision-making, especially when deployed at edge nodes with access to localized system context.

- Trigger-based execution allows agents to be launched dynamically, making systems more efficient by responding only when conditions are met.

- Hierarchical multi-agent architectures enable both local and global optimization, with leaf agents handling real-time decisions and higher-level agents aggregating broader context.

- Asynchronous communication is essential to coordinate interactions between continuously running and on-demand agents.

- This design pattern supports scalable, low-latency systems, particularly in environments like telecom networks where both speed and coordination matter.

Continuous and Trigger-Based Agent Patterns

While AI agents can be reactive to user inputs, programmatic triggers, or messages from other agents, there are other interesting multi-agent design patterns that call for continuously running agents, and/or launching them if a certain condition is met. In these cases, we would like to also retain the ability to communicate with the agents asynchronously.

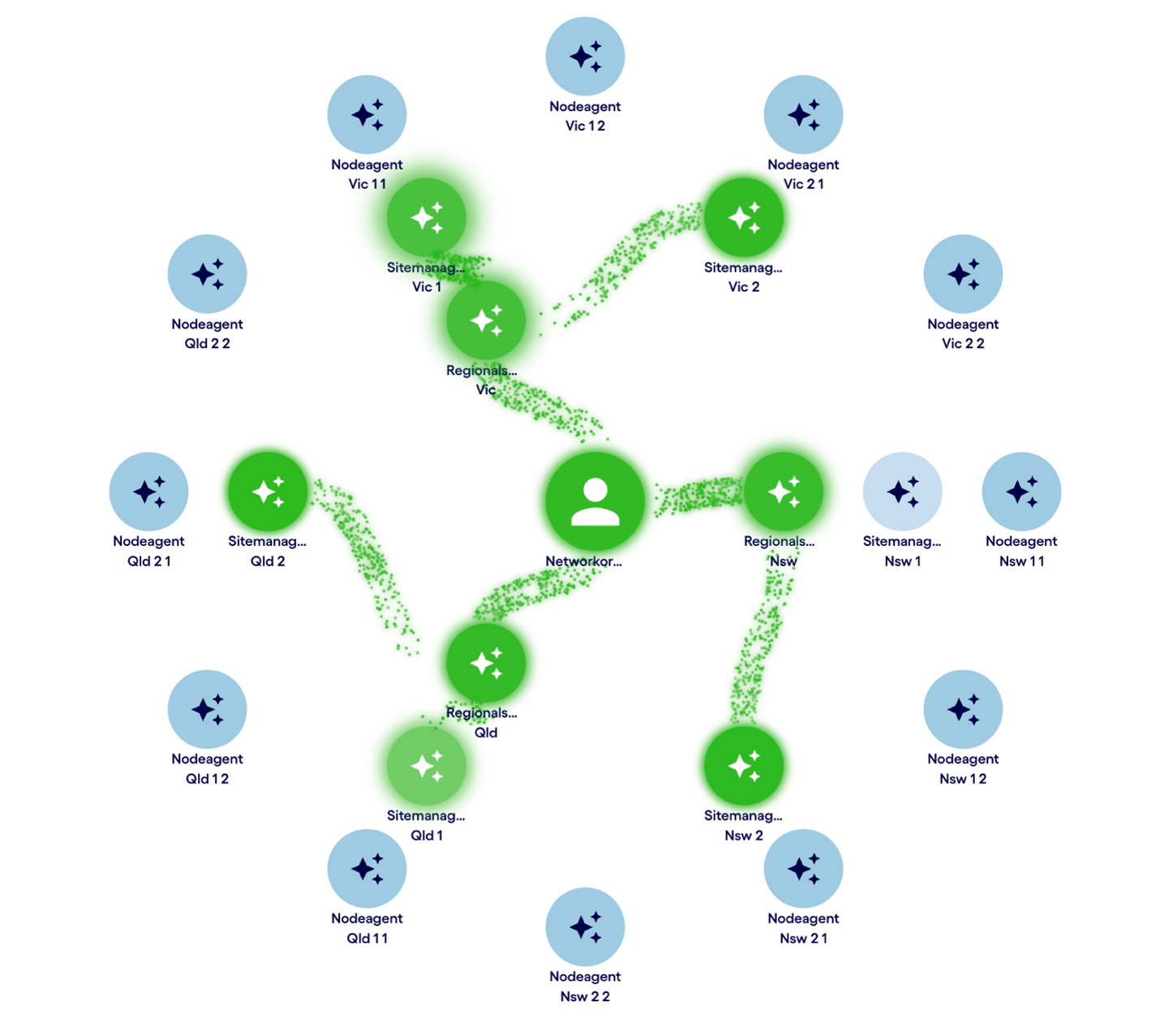

An interesting design pattern emerges when some agents are expected to run proactively and make local decisions autonomously. In figure 1, the leaf-node agents are running entirely on the network nodes. This gives the telco a latency advantage for decisions that need quick response times and can be handled with local knowledge of the node’s operations. This means that the leaf-node agents are running open-source LLMs and there is no need for an API call to hosted LLM for them to operate.

The other agents in the hierarchy, on the other hand, represent consolidation points that take wider regional context into consideration in their decisioning. This is especially useful when regional or system-wide network balancing choices need to be considered.

Example: Multi-Agent Incident Reporting in Telecom Networks

For example, here is an exchange with the network to generate a consolidated report:

User > Give me a report on severe incidents over the past hour and mitigation steps taken.

Agent > Here is a report on severe incidents over the past hour and the mitigation steps taken across various regions:

New South Wales (NSW)

- Temporary Spike in Traffic Latency and Packet Loss:

- Time: 14:15 AEST

- Impact: Temporary spike due to an unexpected surge in data traffic.

- Mitigation Steps:

- Traffic rerouted through alternate pathways.

- Dynamic load balancing implemented.

- Additional CPU and memory resources allocated.

- Result: Situation stabilized, normal operations resumed.

- High CPU Load:

- Time: 10:15 AM

- Impact: CPU load spiked to 90%, causing potential slowdowns.

- Mitigation Steps: Non-essential processes paused, and traffic rerouted.

- Result: CPU load normalized within 10 minutes.

- Increased Packet Loss:

- Time: 10:45 AM

- Impact: Packet loss increased to 15%.

- Mitigation Steps: Bandwidth adjusted and additional capacity allocated.

- Result: Packet loss reduced to under 2% within 5 minutes.

Victoria (VIC)

- Spike in Traffic Latency:

- Time: 14:30

- Impact: Due to increased load from local events.

- Mitigation Steps: Rerouting and additional bandwidth allocation.

- Result: Latency returned to normal within 15 minutes.

- Packet Loss and CPU Load Increase:

- Time: 15:00

- Impact: Due to a malfunctioning switch and application.

- Mitigation Steps: Faulty switch isolated, traffic redirected, and application throttled.

- Result: Packet loss reduced and CPU load normalized.

Queensland (QLD)

- Spikes in Network Latency and Packet Loss:

- Impact: Due to surges in traffic from events.

- Mitigation Steps: Rerouting, bandwidth optimization, and increased resource allocation.

- High CPU Load:

- Times: 10:15 AM and 11:23 AM

- Mitigation Steps: Redistributed tasks and scheduled task to clear processes.

- Increased Traffic Latency:

- Time: 10:45 AM

- Description: Due to a routing loop.

- Mitigation Steps: Temporary route bypass.

- Increased Packet Loss on Brisbane West link:

- Time: 11:45 AM

- Mitigation Steps: Adjusted routing protocols.

Overall, all incidents have been effectively managed and network performance is returning to normal levels. Monitoring has been intensified to prevent recurrence.

Example: Top-Down Network Rebalancing with Agents

A different usage for this system is to give top-down network rebalancing commands. For example:

User > There will be a high bandwidth event in the Sydney area tomorrow from 4-5pm. Suggest load rebalancing steps based on current and predicted network status.

Agent > Here are the suggested load rebalancing steps for the high bandwidth event in the Sydney area tomorrow from 4-5 pm, based on current and predicted network status:

Current Network Status:

- Node SYD01: CPU load at 65%, Memory load at 60%, Latency at 23ms, Packet loss at 0.5%.

- Node SYD02: CPU load at 70%, Memory load at 62%, Latency at 21ms, Packet loss at 0.4%.

- Node SYD03: CPU load at 55%, Memory load at 58%, Latency at 19ms, Packet loss at 0.3%.

Predicted Network Status (4-5pm):

- Anticipated increase in CPU and memory loads by 20% due to the event.

Load Rebalancing Steps:

- Node Prioritization: Temporarily prioritize traffic through Node SYD03, as it currently has the lowest load and latency.

- Traffic Redistribution API Call: Use the API call api/v1/traffic/distribute to adjust traffic loads. Parameters: { "source": "SYD01,SYD02", "target": "SYD03", "percentage": "20" }.

- Dynamic Load Adjustment Protocol: Implement protocol DLA-2023 to dynamically adjust traffic distribution based on real-time load metrics.

- Backup Node Activation: Prepare Node SYD04 as a backup to handle any unexpected load spikes. API call: api/v1/node/activate with parameters { "node": "SYD04" }.

Monitoring and Reporting:

- Continuously monitor the CPU, memory, and latency metrics during the event.

- Report any anomalies to the SiteManager immediately through the monitoring system.

Post-Event Evaluation:

- After the event, use api/v1/network/rebalance to revert any temporary adjustments and restore normal operating conditions.

These steps are designed to effectively manage the increased load during the event and ensure stable network performance.

Extending the Pattern: Agriculture and Utility Systems

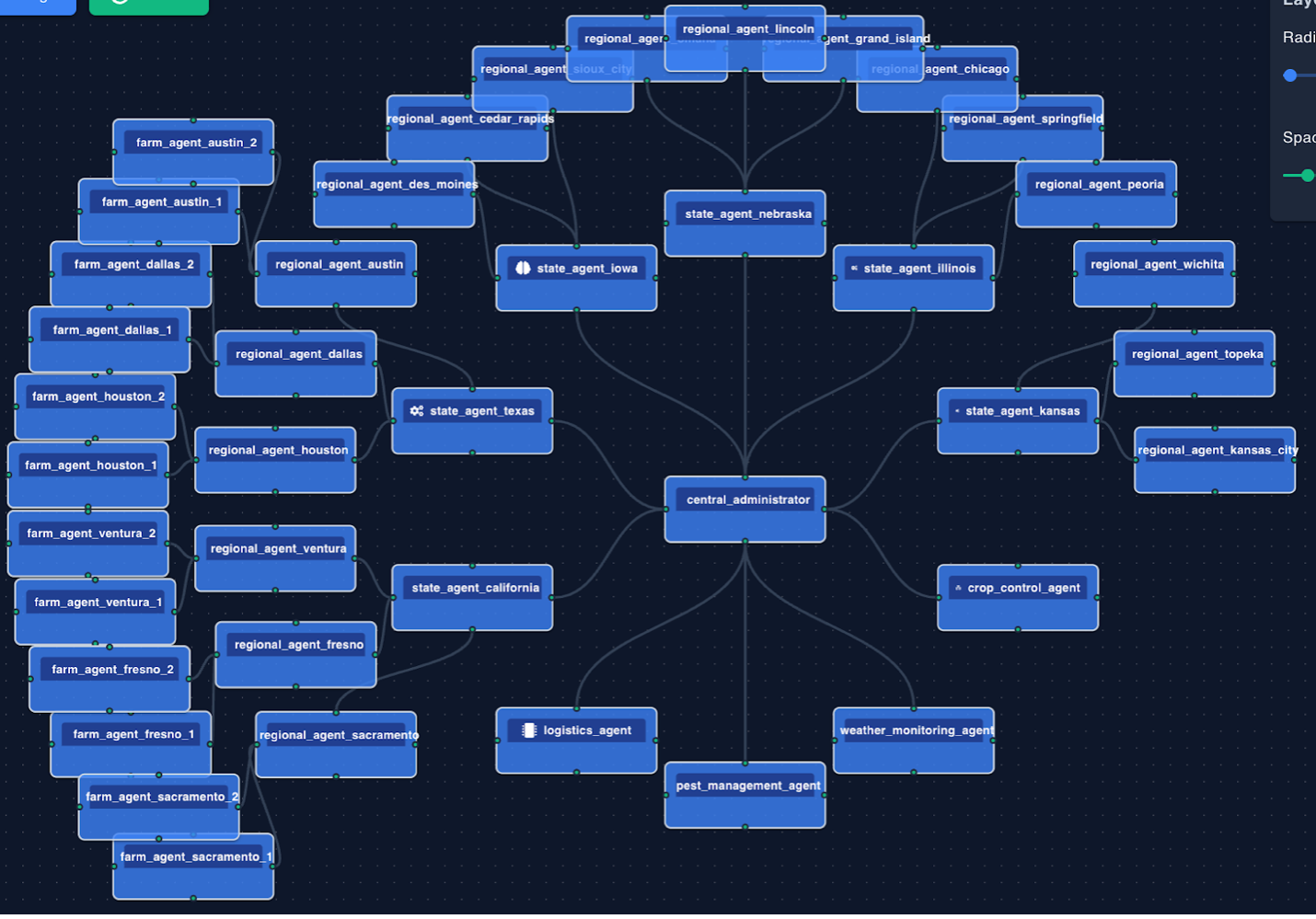

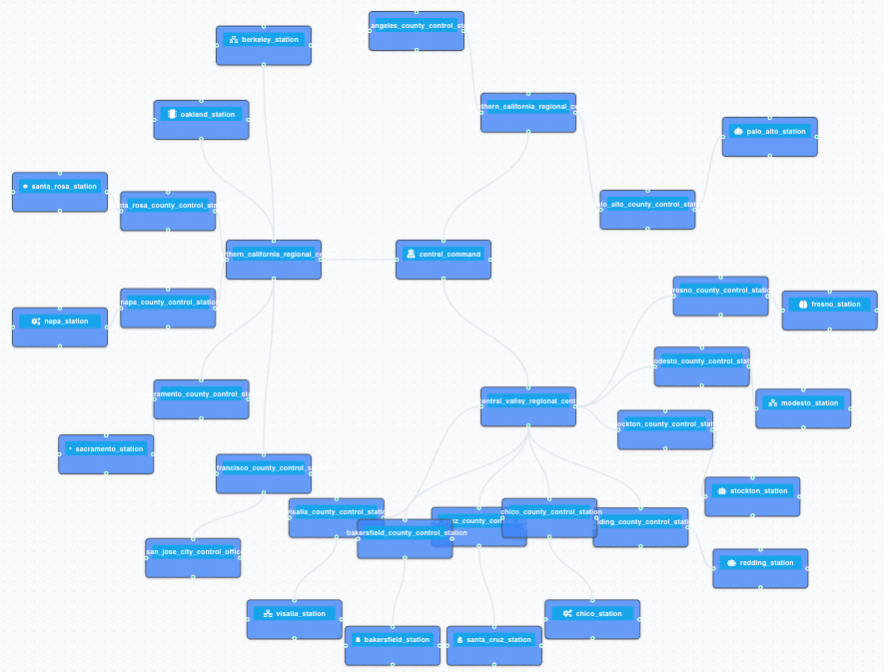

Incidentally, the same multi-agent design pattern can be used for use-cases where the frequency of decision making is not as high. Figure 2, for example, shows such a multi-agent system for an agriculture business, with agents running proactively and semi-autonomously in the farms, reporting through a regional and state hierarchy back to the central command, and figure 3 shows the same pattern repeated in for a utility company.

Figure 2. A multi-agent system for a multi-farm agriculture business.

Figure 3. An agent network for a power utility company in California.

Implementing Continuous and Trigger-Based Agents with neuro-san

So how can we implement such multi-agentic systems? In neuro-san, agent networks can easily be defined in config files, and even using a vibing editor (which is itself multi-agentic). This platform easily allows you to call agents from code and have agents call your code, and so building design patterns like that of the above is quite straight forward.

I have created a small runnable example of how to build such a system here. The system runs neuro-san agents in a periodic loop via a separate LoopRunner service that can be controlled via a neuro-san agent. Users or other agent networks can asynchronously send messages to agents running in these loops. The wrapper also allows for target agent networks to be launched or awakened if a given condition is met.

To try it out, install and follow the README instructions that will show you how to run the neuro-san server serving up the control agent network, the LoopRunner process that waits for messages to trigger, run, or wake up agents, and a simple CLI for sending commands to the LoopRunner using an agent network called loopy_manager.

As a minimal example, we have included a loopy_echo agent network, that increments a counter in sly_data. The following command to the loopy_manager through the CLI will start this agent network in a loop, and run it every 10 seconds:

start demo basic/loopy_echo 10 tickThis basically means run the agent network defined in the basic/loopy_echo.hocon definition file every 10 seconds and pass it the command ‘tick’ every time you run it.

You can then communicate with the live loop_echo agent asynchronously by giving commands like the ones below using the CLI:

send demo what is the counter?

send demo hello

send demo reset the counter

send demo what is the counter?

stop demoThe setup also allows you to load and run agents upon a condition being met. For this, we’ve provided a handy class under apps.loopy_runner.triggers that contains a few generic conditions. Feel free to add to them in your implementations. One such trigger is every_n_ticks, so you can use the same loopy_echo example above, but have it awakened, in case of the example below, every 3 ticks (or 30 seconds):

start demo basic/loopy_echo 10 tick \

trigger_method apps.loopy_runner.triggers.every_n_ticks \

trigger_args {"n":3}A more practical example would be the use of the contains_keyword trigger:

start sensor1 basic/loopy_echo none

trigger_method apps.loopy_runner.triggers.contains_keyword

trigger_args {"keywords":["alert"]}In this case, the loopy_echo is given to the LoopRunner, but it is not launched until and unless the keyword “alert” is spotted in a message. For example, loopy_echo will be called if the following message is received:

signal sensor1 {"message":"temperature alert in zone 4"}But it will not be triggered if the message below is sent:

signal sensor1 {"message":"all clear"}Hopefully the implementation above, gives you a simple way to design powerful multi-agent systems, with a variety of agentic execution modes.

Babak Hodjat is the Chief AI Officer at Cognizant and former co-founder & CEO of Sentient. He is responsible for the technology behind the world’s largest distributed AI system.